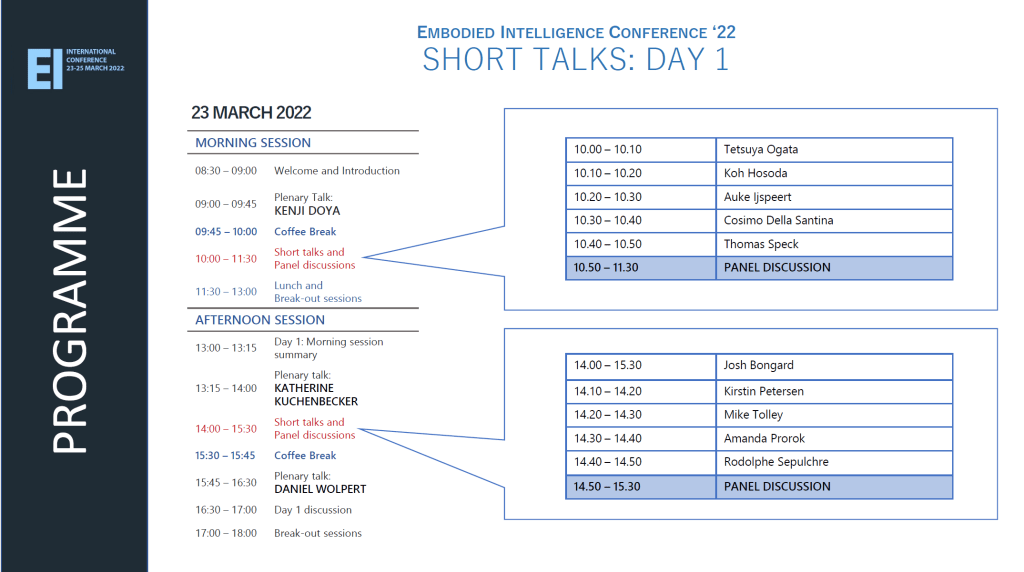

23 MARCH 2022

PLEASE NOTE THAT, OWING TO COPYRIGHT OR INTELLECTUAL PROPERTY PERMISSIONS, WE ARE UNABLE TO SHARE RECORDINGS OF SOME SESSIONS.

VIDEO: INTRODUCTION AND WELCOME: Fumiya Iida (University of Cambridge)

VIDEO: DAY 1 MORNING SESSION SUMMARY: Josie Hughes (EPFL)

VIDEO: DAY 1 CLOSING PANEL DISCUSSION

PLENARY TALKS

KENJI DOYA (Okinawa Institute of Science and Technology)

VIDEO: EMBODIED AGENTS FOR SURVIVAL, REPRODUCTION, AND PREDICTION

Abstract: Reinforcement learning agents can acquire a variety of behaviors through exploration of the environment and reward feedback. Can artificial agents acquire their own reward functions? The reward systems of animals are shaped by evolution to support survival and reproduction. We developed an embodied evolution framework in which reward functions and learning hyperparameters are evolved. We further discuss how novel information can serve as an additional reward that allows agents to better predict the environment.

KATHERINE KUCHENBECKER (Max Planck Institute for Intelligent Systems)

VIDEO: HAPTIC INTELLIGENCE FOR HUMAN-ROBOT INTERACTION

Abstract: The sense of touch plays a crucial role in the sensorimotor systems of humans and animals. In contrast, today’s robotic systems rarely have any tactile sensing capabilities because artificial skin tends to be complex, bulky, rigid, delicate, unreliable, and/or expensive. To safely complete useful tasks in everyday human environments, robots should be able to feel contacts that occur across all of their body surfaces, not just at their fingertips. Furthermore, tactile sensors need to be soft to cushion contact and transmit loads, and their spatial and temporal resolutions should match the requirements of the task. We are thus working to create tactile sensors that provide useful contact information across different robot body parts. However, good tactile sensing is not enough: robots also need good social skills to work effectively with and around humans. I will elucidate these ideas by showcasing several systems we have created and evaluated in recent years, including Insight and HuggieBot.

DANIEL WOLPERT (Columbia University)

VIDEO: CONTEXTUAL INFERENCE UNDERLIES THE LEARNING OF SENSORIMOTOR REPERTOIRES

Abstract: Humans spend a lifetime learning, storing, and refining a repertoire of motor memories. However, it is unknown what principle governs how our continuous stream of sensorimotor experience is segmented into separate memories, and how we adapt and use this growing repertoire. Here we develop a principled theory of motor learning based on the key insight that memory creation, updating, and expression are all controlled by a single computation: contextual inference. Unlike dominant theories of single-context learning, our repertoire-learning model accounts for key features of motor learning that previously had no unified explanation, and it predicts novel phenomena, which we confirm experimentally. These results suggest that contextual inference is the key principle underlying how a diverse set of experiences is reflected in motor behavior.

MORNING SHORT TALKS (VIDEOS)

- Tetsuya Ogata: Deep Predictive Learning for Embodied Intelligence

- Koh Hosoda: Biomimetic Robotic Feet

- Auke Ijspeert: Exploring the interaction of feedforward and feedback control in the spinal cord using biorobots

- Cosimo Della Santina: Discovering, embedding, and exciting oscillatory intelligence in (soft) robots

- Thomas Speck: Embodied intelligence in plants: Does it exist? [Recording not possible]

- PANEL DISCUSSION