24 MARCH 2023

PLEASE NOTE THAT OWING TO COPYRIGHT OR INTELLECTUAL PROPERTY PERMISSIONS WE ARE UNABLE TO SHARE RECORDINGS OF SOME SESSIONS

VIDEO: DAY 1 & 2 SUMMARY: Arsen Abdulali (University of Cambridge)

VIDEO: CONFERENCE CLOSING REMARKS: Fumiya Iida (University of Cambridge)

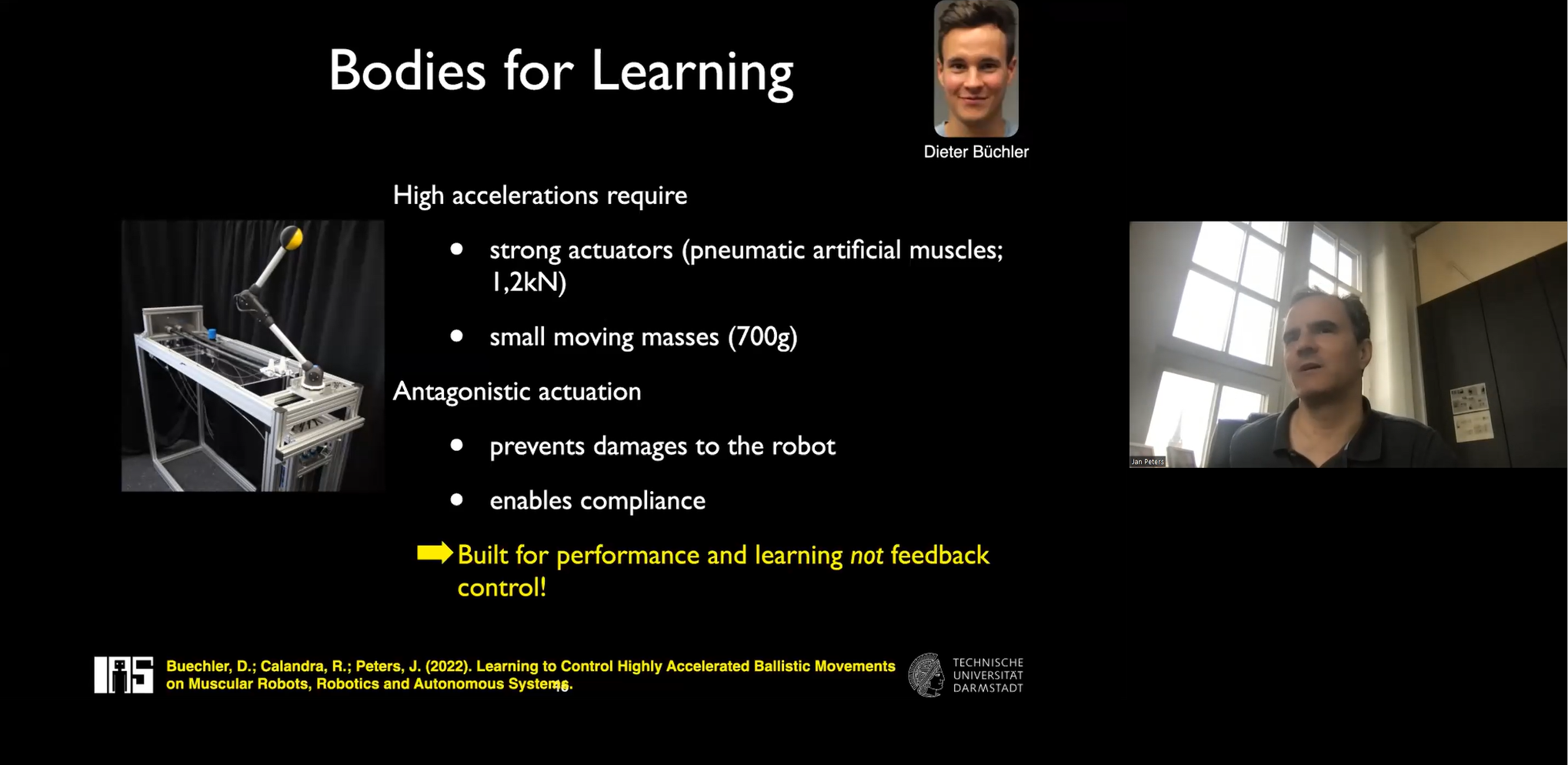

FINAL PLENARY TALK

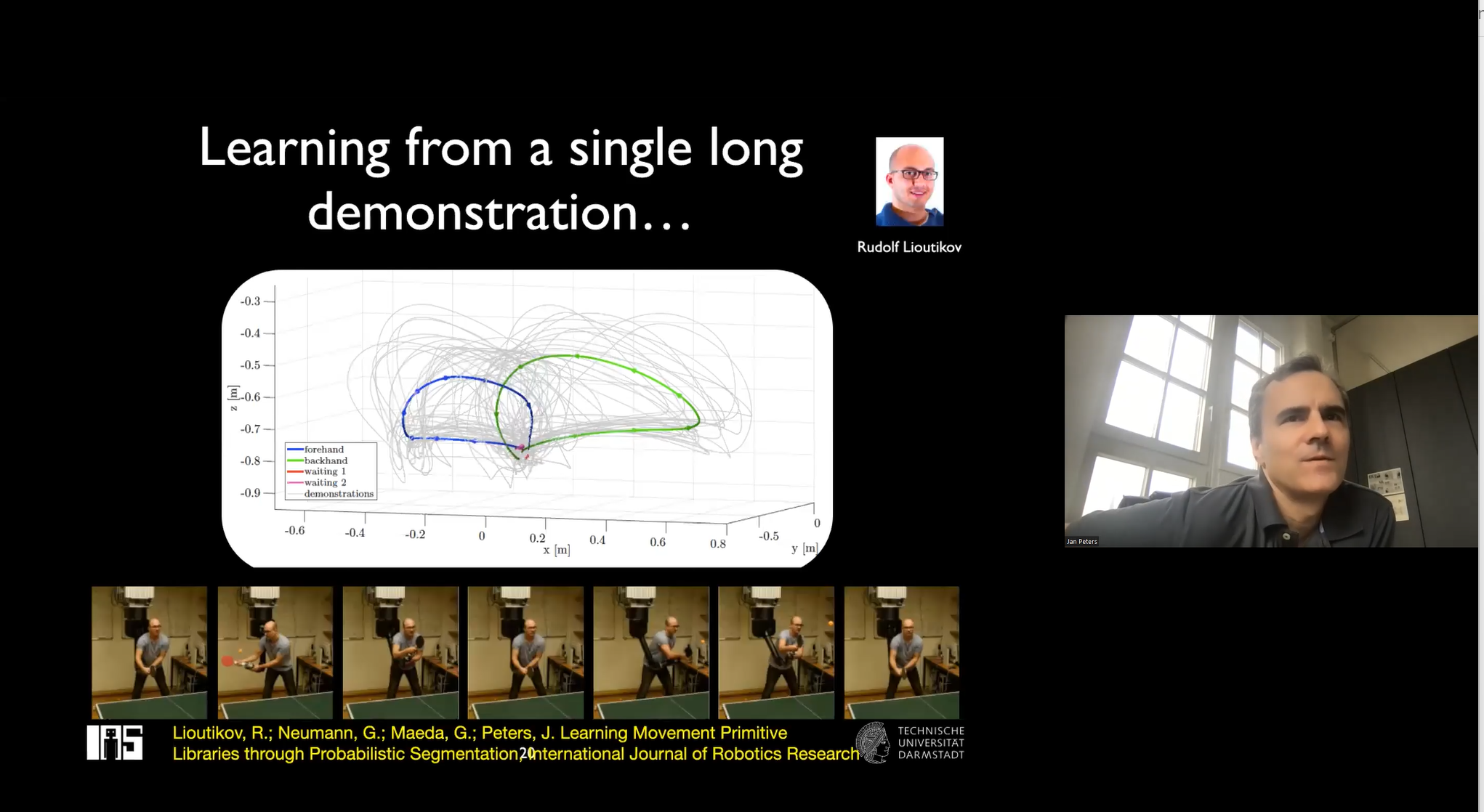

JAN PETERS (Technische Universität Darmstadt, Germany)

VIDEO: INDUCTIVE BIASES FOR ROBOT REINFORCEMENT LEARNING

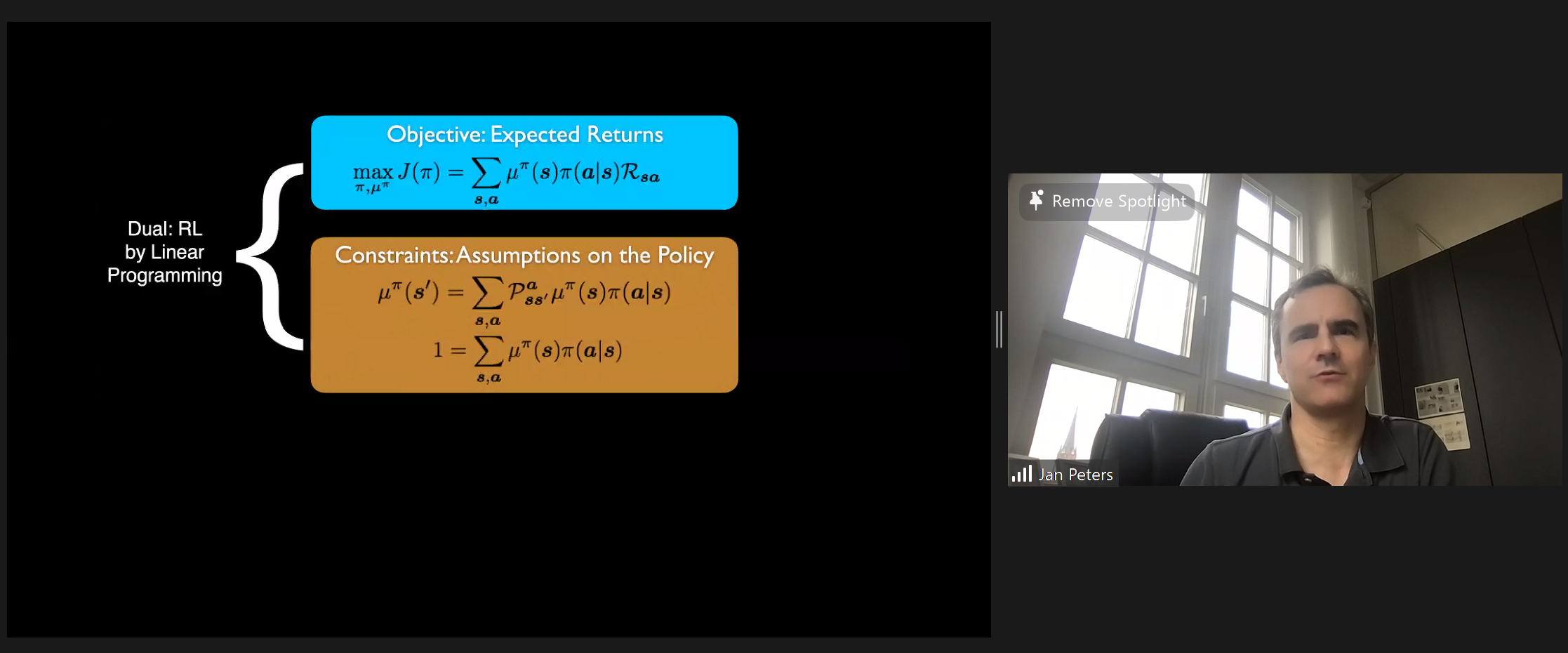

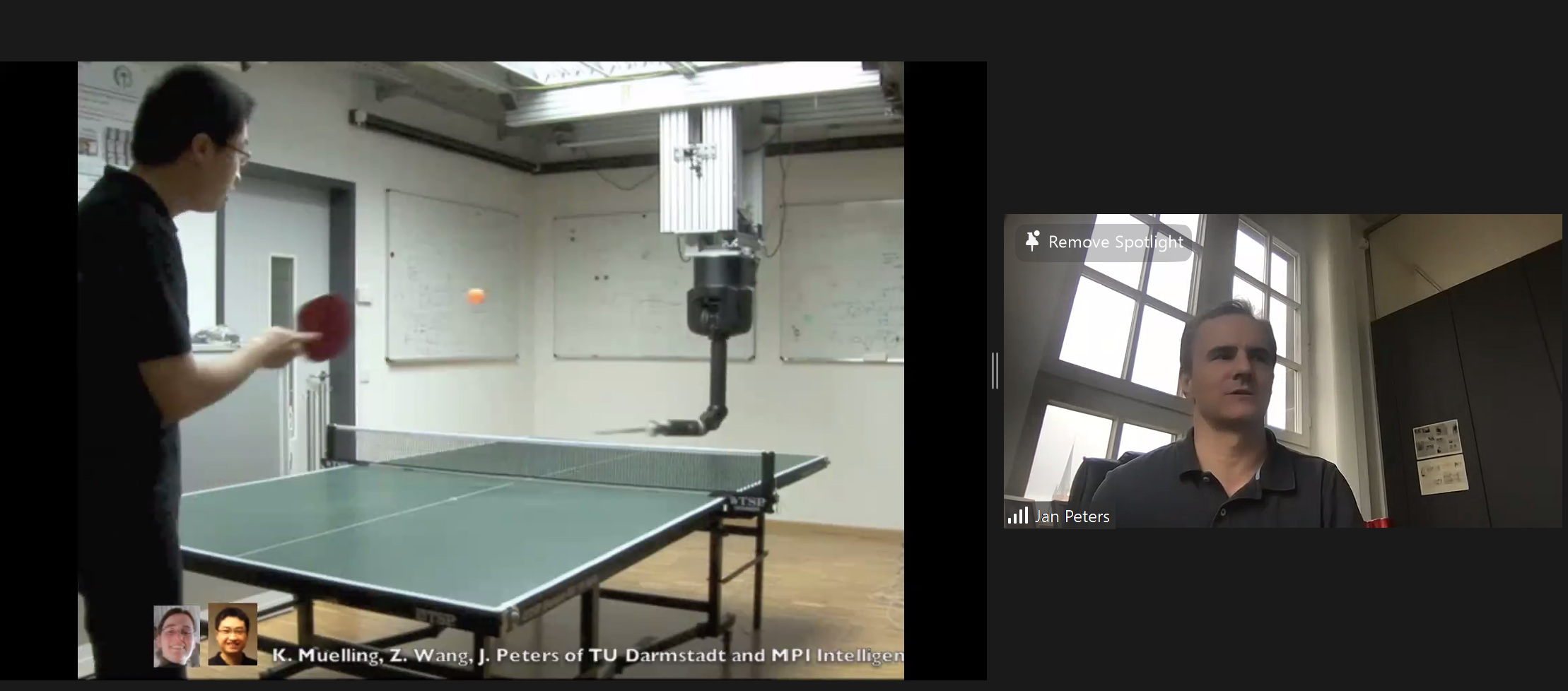

Abstract: Autonomous robots that can assist humans in situations of daily life have been a long-standing vision of robotics, artificial intelligence, and cognitive sciences. A first step towards this goal is to create robots that can learn tasks triggered by environmental context or higher-level instruction. However, learning techniques have yet to live up to this promise, as only a few methods manage to scale to high-dimensional manipulator or humanoid robots. In this talk, we investigate a general framework suitable for learning motor skills in robotics which is based on the principles behind many analytical robotics approaches. To accomplish robot reinforcement learning from just a few trials, the learning system can no longer explore all learnable solutions but has to prioritize one solution over others, independent of the observed data. Such prioritization requires explicit or implicit assumptions, often called ‘inductive biases’ in machine learning. Extrapolation to new robot learning tasks requires inductive biases deeply rooted in general principles and domain knowledge from robotics, physics and control. Empirical evaluations on several robot systems illustrate the effectiveness and applicability to learning control on an anthropomorphic robot arm. These robot motor skills range from toy examples (e.g., paddling a ball, ball-in-a-cup) to playing robot table tennis, juggling and manipulation of various objects.

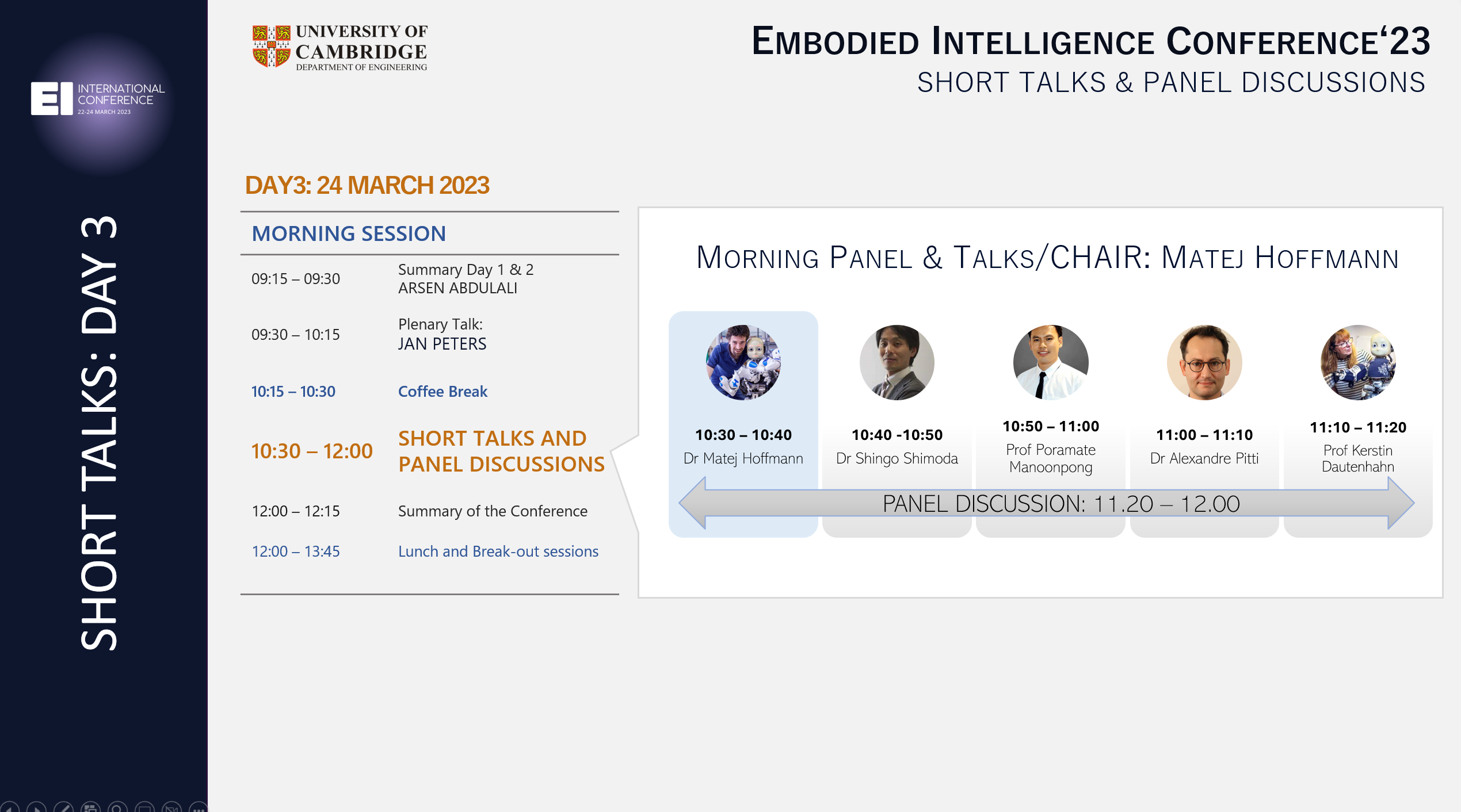

FINAL SHORT TALK SESSION (VIDEOS)

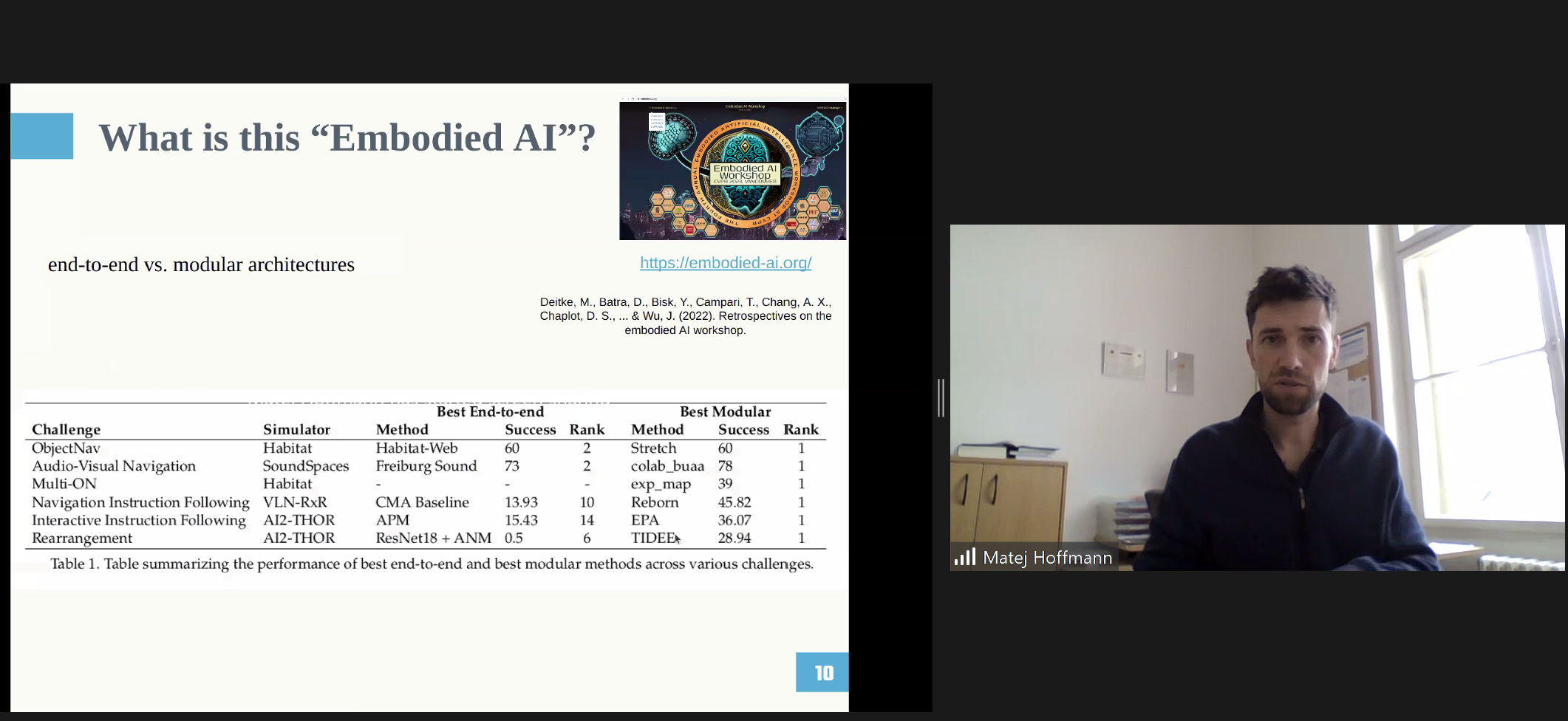

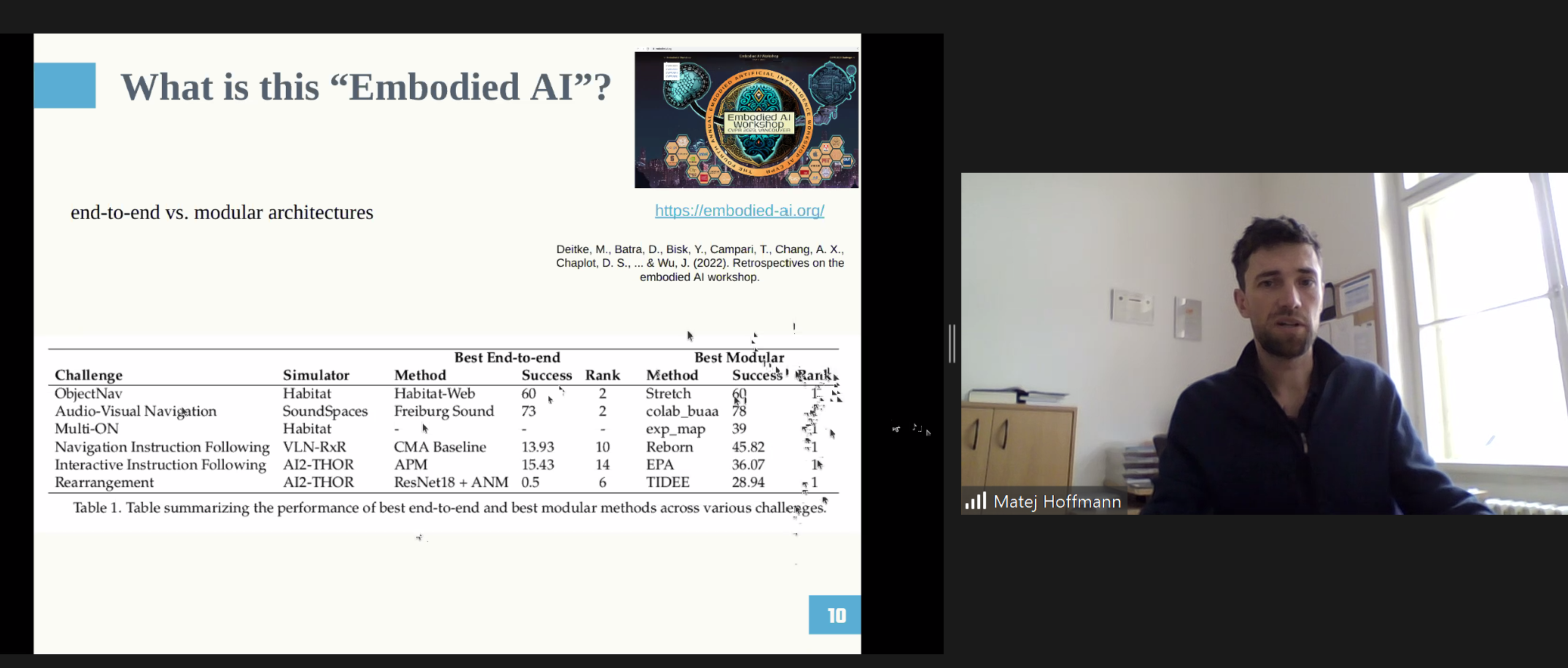

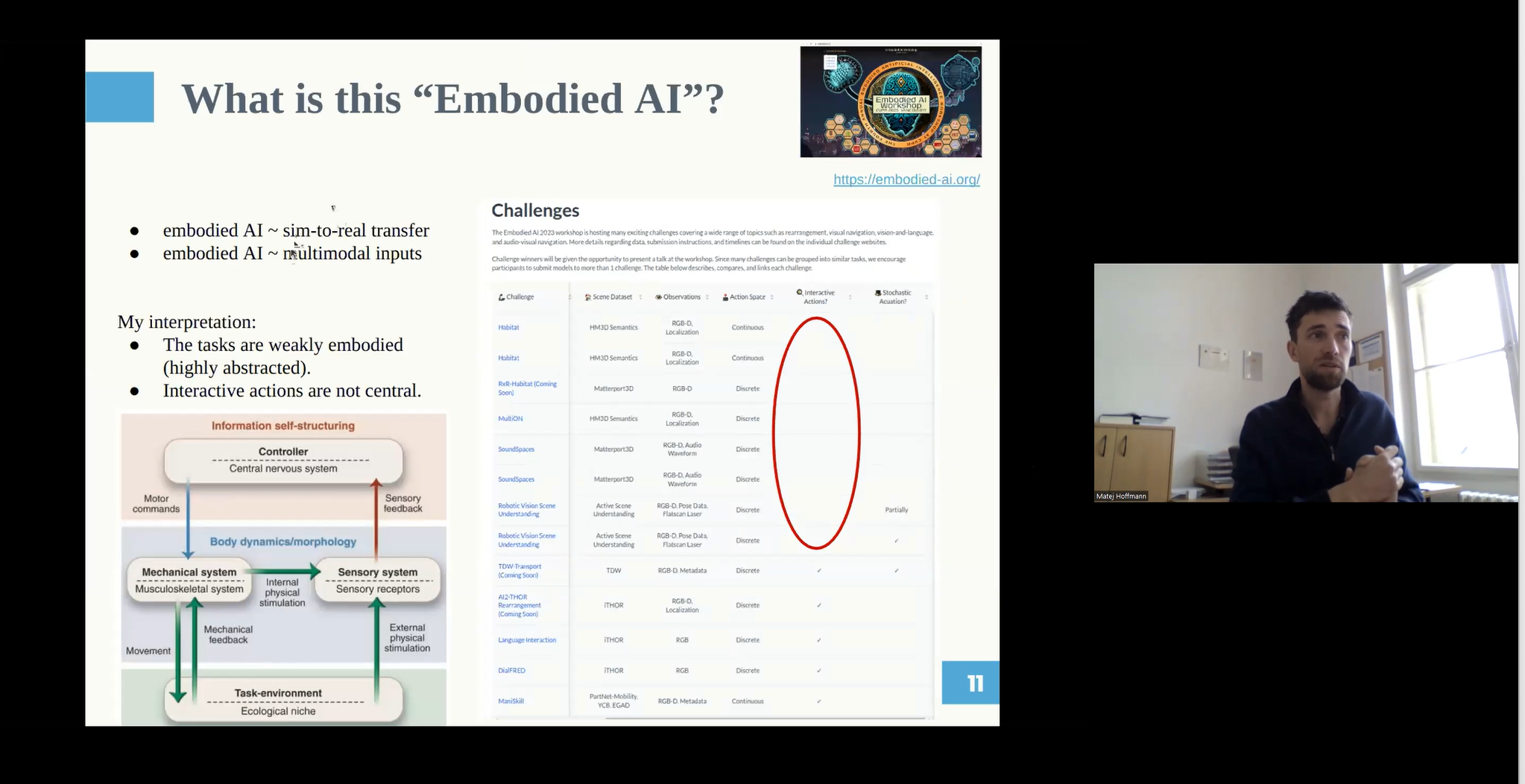

- Matej Hoffmann: Embodied AI adopted by the deep learning community – is it really embodied?

- Abstract: There is a growing interest in Embodied AI in the machine learning, deep learning, computer vision, and robotics communities (e.g., https://embodied-ai.org/, https://iros2022.org/, https://twitter.com/Embodied_AI). In this talk I will take a critical look at these developments and put them in the context of several decades of research in embodied intelligence, morphological computation, and soft robotics.

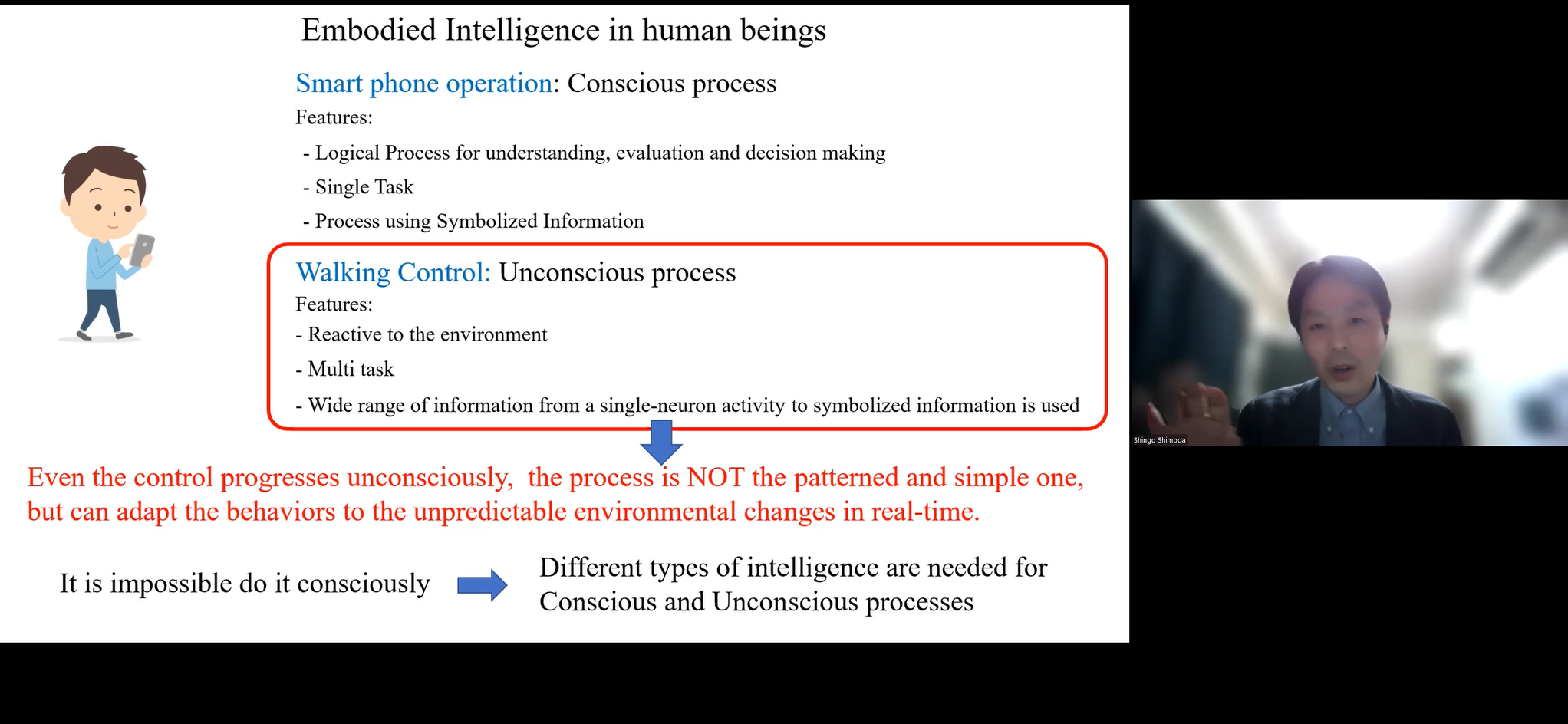

- Shingo Shimoda: Unconscious Intelligence embedded in Human beings

- Abstract: We often move around without much thought and reach for a cup of coffee on our desk while reading a book. Although these actions seem ordinary, they are not simply a combination of patterned movements. Our living environment is full of uncertainty. Even for simple actions such as walking, many factors cannot be accurately predicted or measured, such as the state of the ground surface. Real-time adaptation is critical to accomplishing our goals in such an environment and to our survival. Interestingly, adaptation occurs mainly unconsciously, and we cannot consciously alter our behavior to adapt to unknown environments. This fact implies that a rich intelligence, which can be called ‘unconscious intelligence’, is present in the unconscious system. Our entire intelligence is constructed with the “conscious intelligence” that progresses in logical thinking. Humans have evolved significantly due to advances in conscious intelligence, while unconscious intelligence has seen little change from that of less-evolved mammals. However, it has become clear that over-advanced conscious intelligence has led to various problems resulting from an imbalance with unconscious intelligence. Is it possible to eliminate these problems by using robots to support unconscious intelligence, which could not evolve naturally? In this presentation, we will discuss various examples.

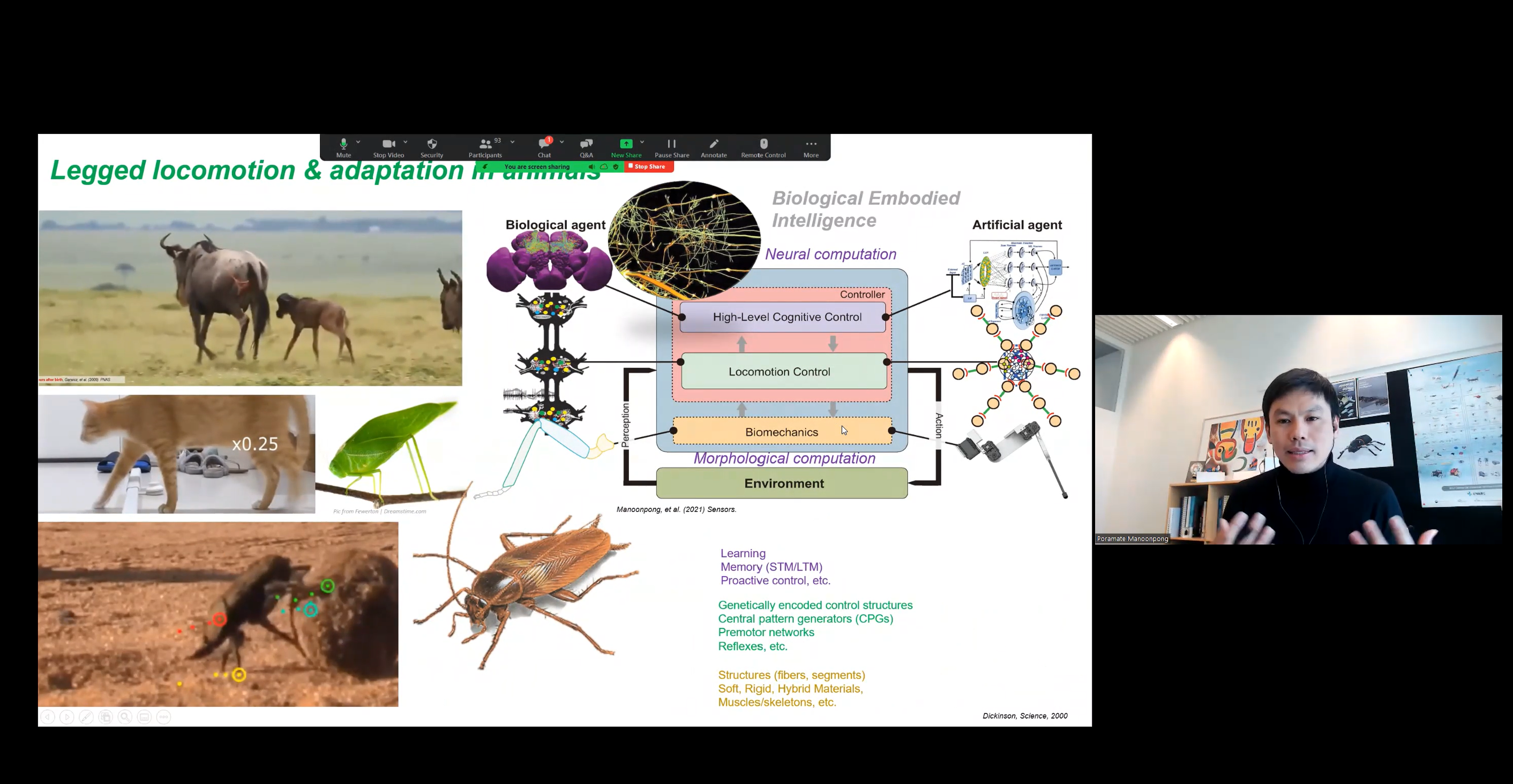

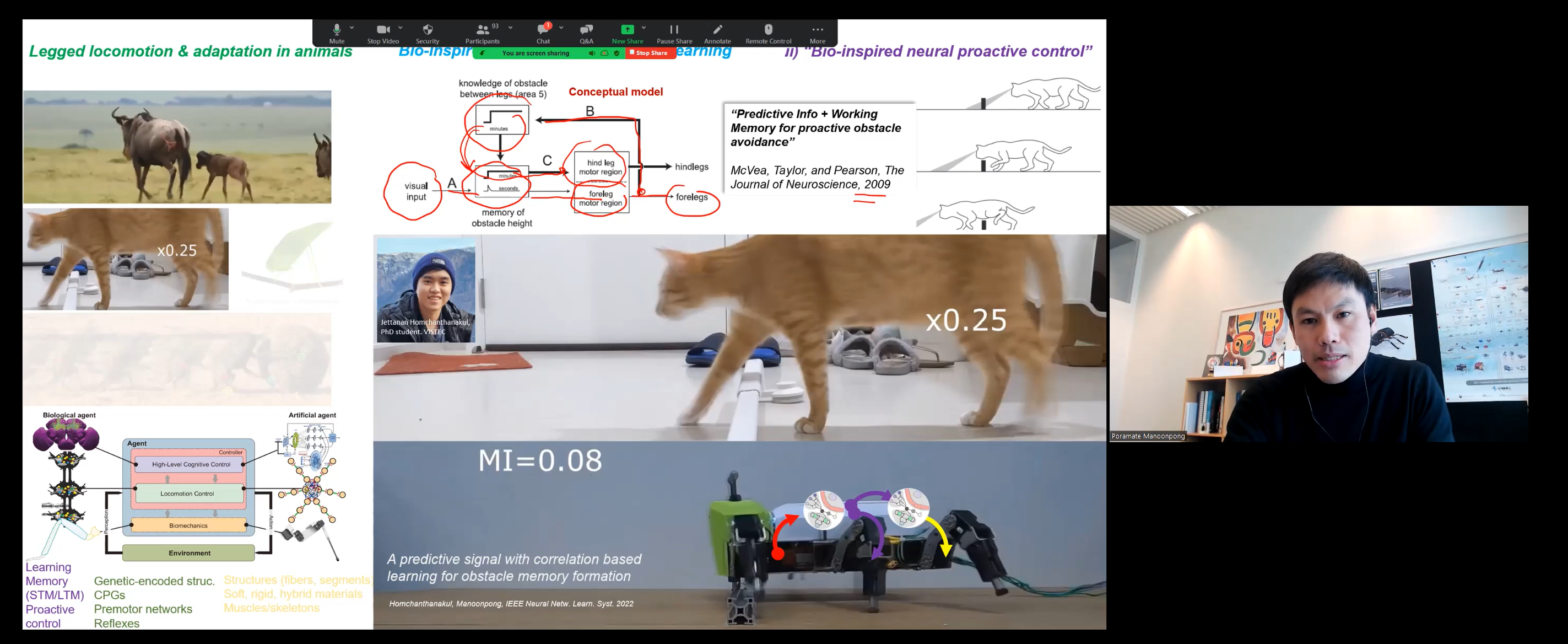

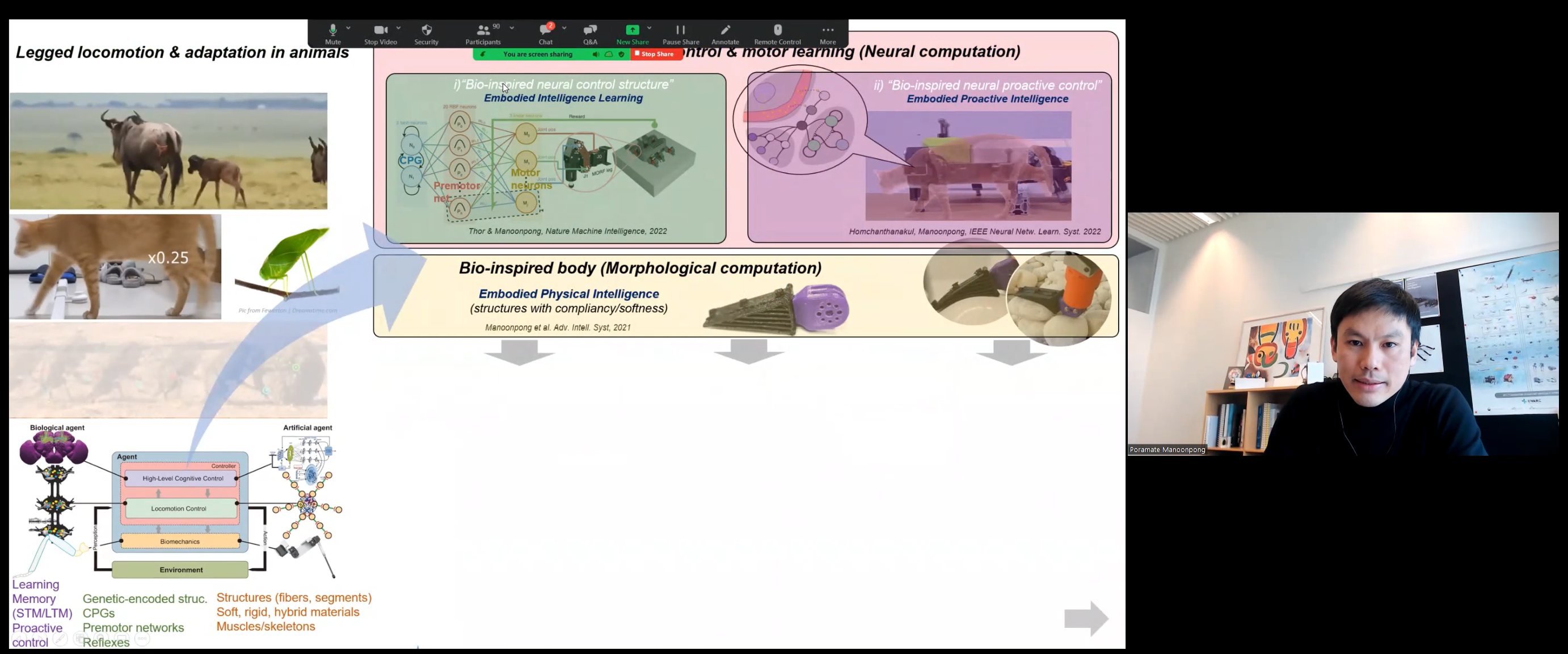

- Poramate Manoonpong: Nature-inspired Embodied Intelligence: From Neurons to Locomotion & Adaptation

- Abstract: Living creatures can quickly form their gaits within minutes of being born. This is due to their genetically encoded neural locomotion control circuits. They can quickly adapt their movement to traverse a variety of substrates and even take proactive steps to avoid colliding with an obstacle. Biological studies reveal that this complex locomotion behavior is largely attained through several ingredients, including neural control mechanisms with plasticity and memory, sensory feedback, and dynamic body-brain-environment interactions. In addition to their neural computation, their biomechanics (morphological computation) also plays an important role in robust behavior. In this talk, I will present how we can realize these ingredients with embodied intelligence inspired by nature for complex machines (robots) so they can become more intelligent, like their biological counterparts.

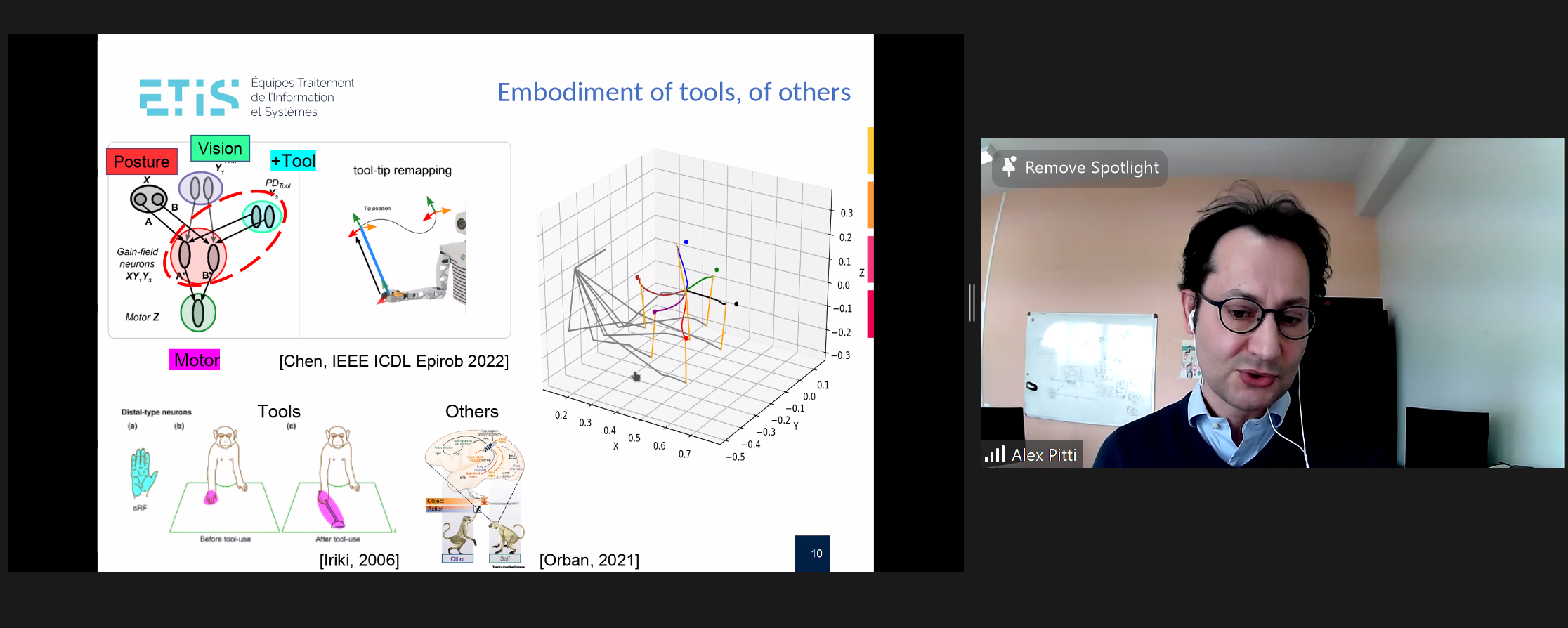

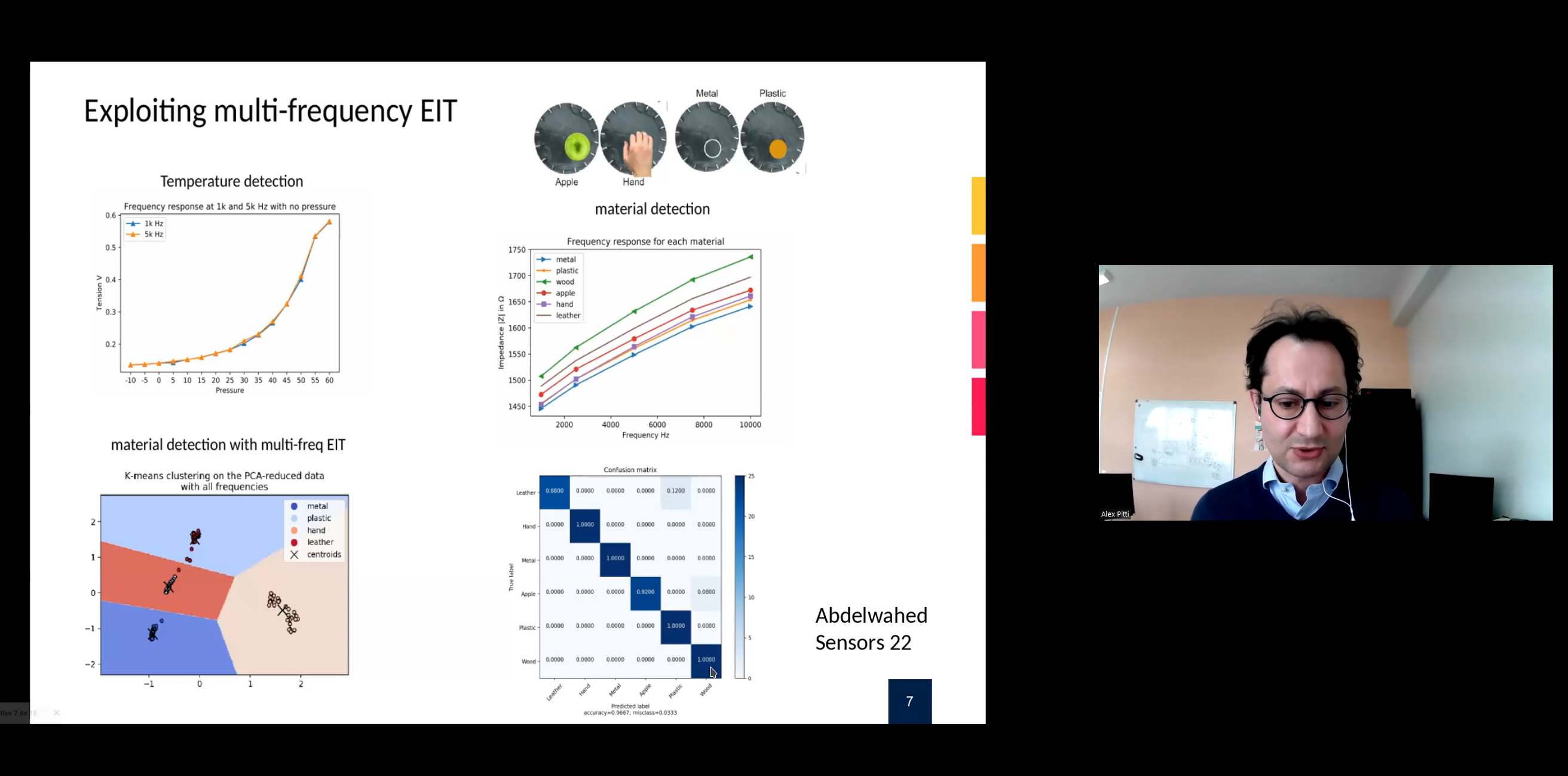

- Alexandre Pitti: Developing one artificial skin to recognize object materials and constructing the robot’s body image

- Abstract: Our skin is our first interface for interacting with objects and others; it represents the physical limits of our own body. We developed a novel artificial skin to endow robots with embodied intelligence skills. It is based on multi-frequency analysis in Electrical Impedance Tomography (EIT). In comparison with conventional methods, this skin is capable of discriminating the material of the objects it is in contact with: metal, skin, leather, plastic. Combined with visual and proprioceptive input, it is capable of representing the spatial limits of a robotic arm, its body image. Future work with this artificial skin will address robot social interaction and prostheses.

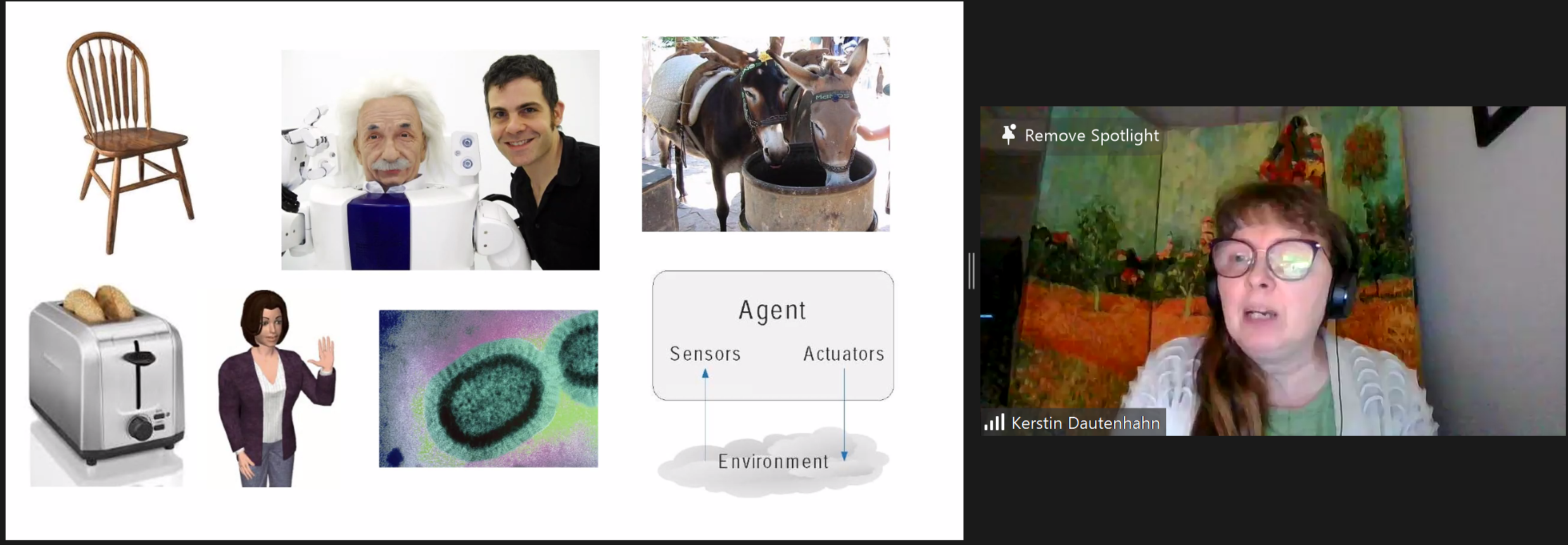

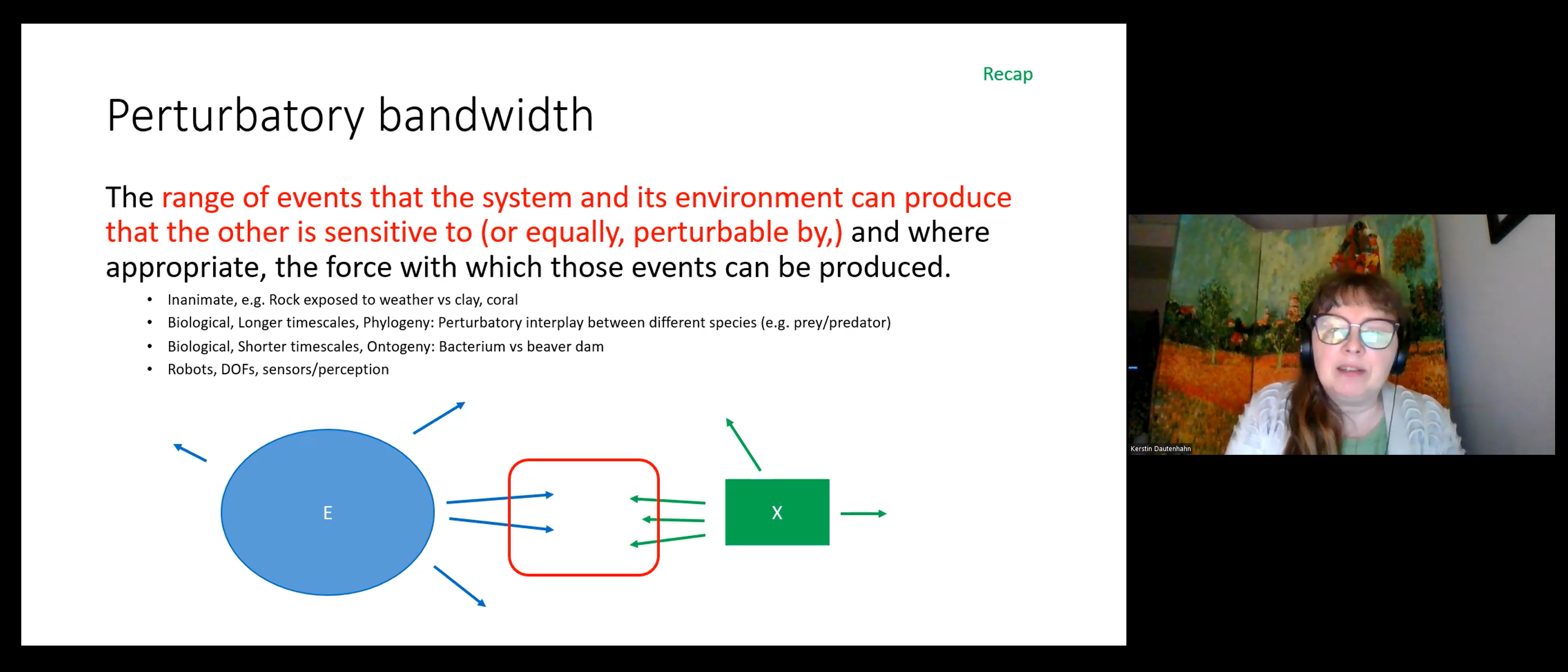

- Kerstin Dautenhahn: Reflections on embodiment in human-robot interaction studies and applications

- Abstract: My research in social robotics and human-robot interaction frequently touches upon the role of embodiment in (social) human-robot interaction. I’ve previously argued that there is a degree of embodiment, rather than a binary distinction. I will illustrate this approach with examples from human-robot interaction/social robotics research. Finally, I will reflect on challenges regarding robot embodiment in real-world applications.

- PANEL DISCUSSION
