22 MARCH 2023

PLEASE NOTE THAT OWING TO COPYRIGHT OR INTELLECTUAL PROPERTY PERMISSIONS WE ARE UNABLE TO SHARE RECORDINGS OF SOME SESSIONS

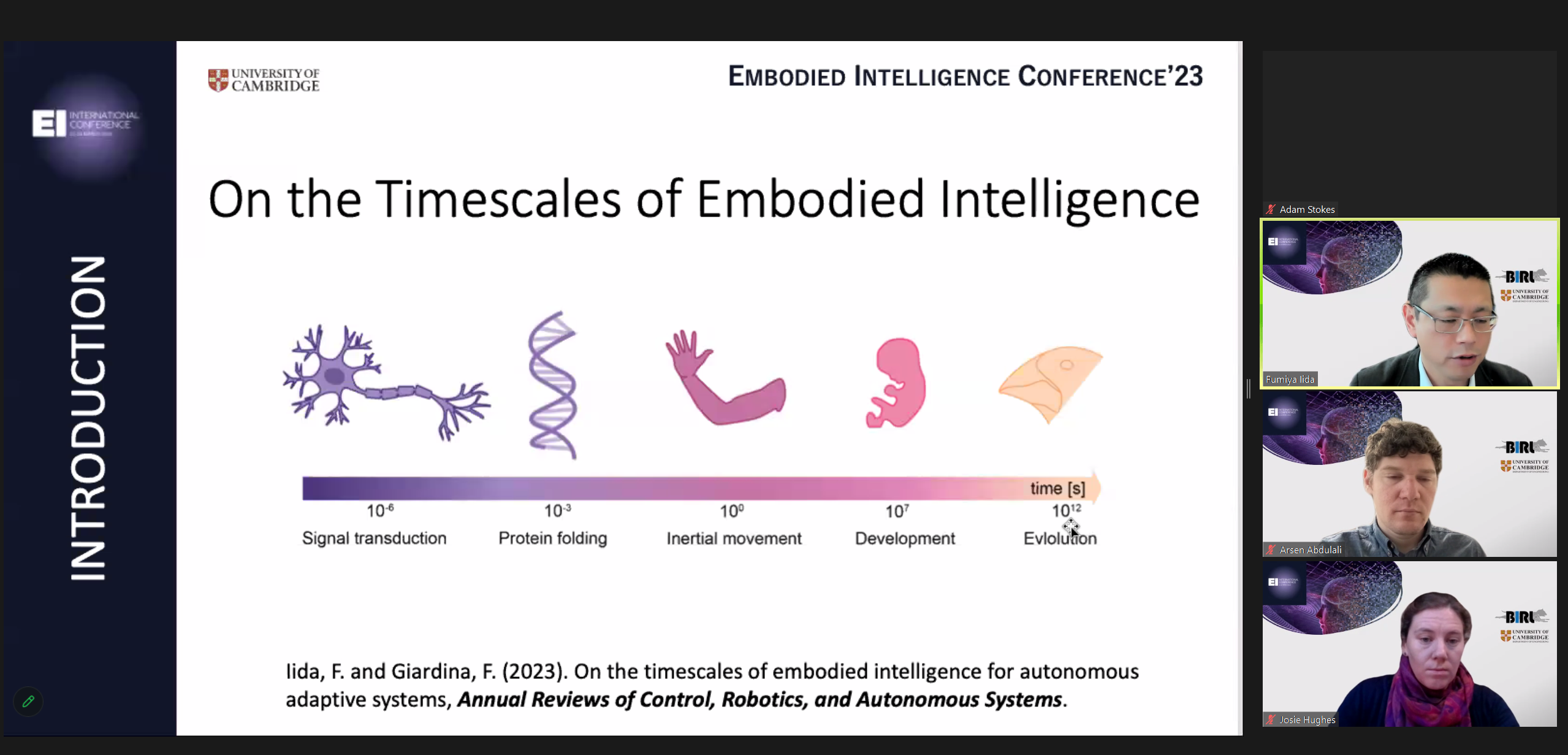

VIDEO: INTRODUCTION AND WELCOME: Fumiya Iida (University of Cambridge)

VIDEO: DAY 1 MORNING SESSION SUMMARY: Josie Hughes (EPFL)

VIDEO: DAY 1 CLOSING PANEL DISCUSSION

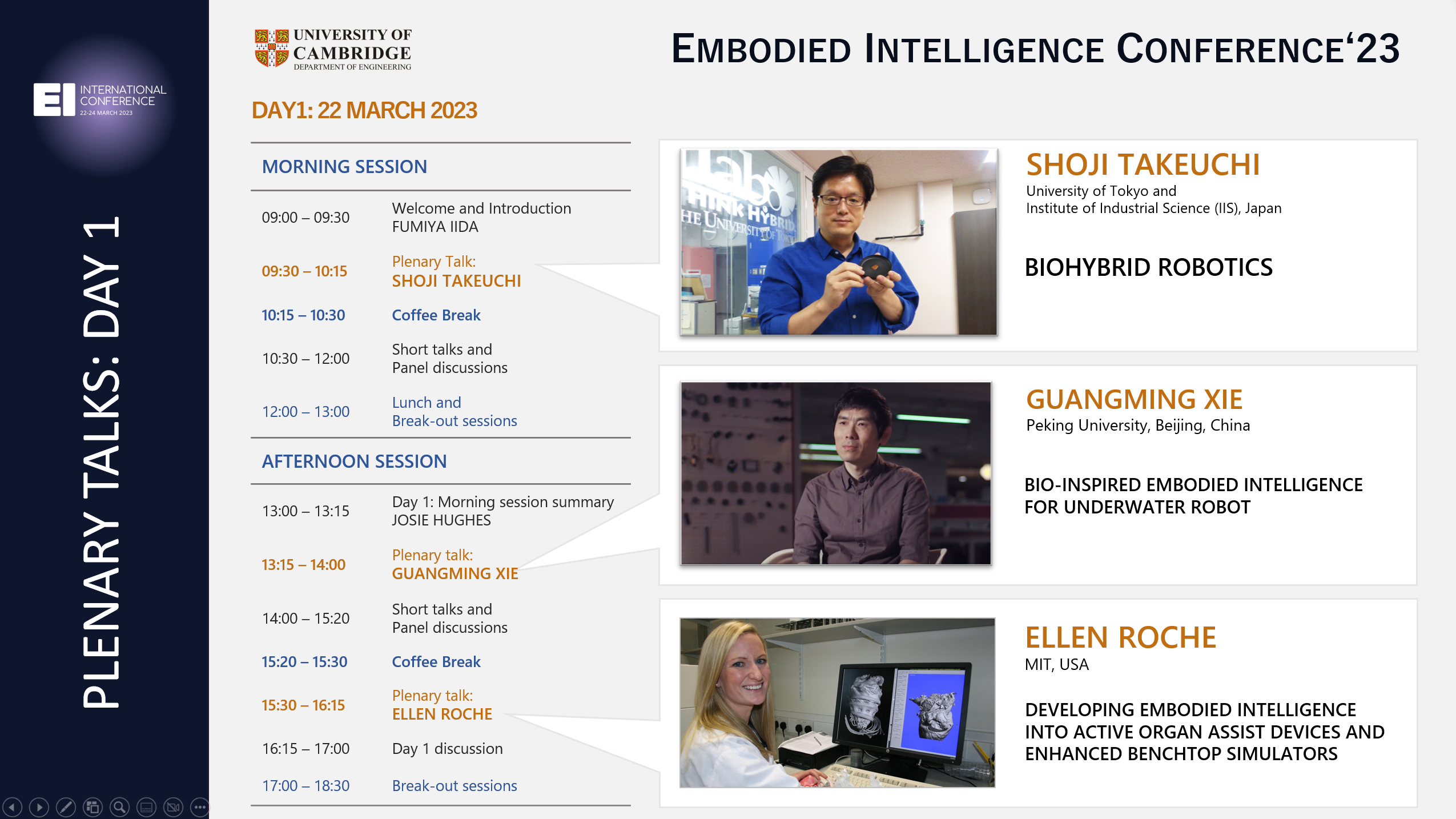

PLENARY TALKS

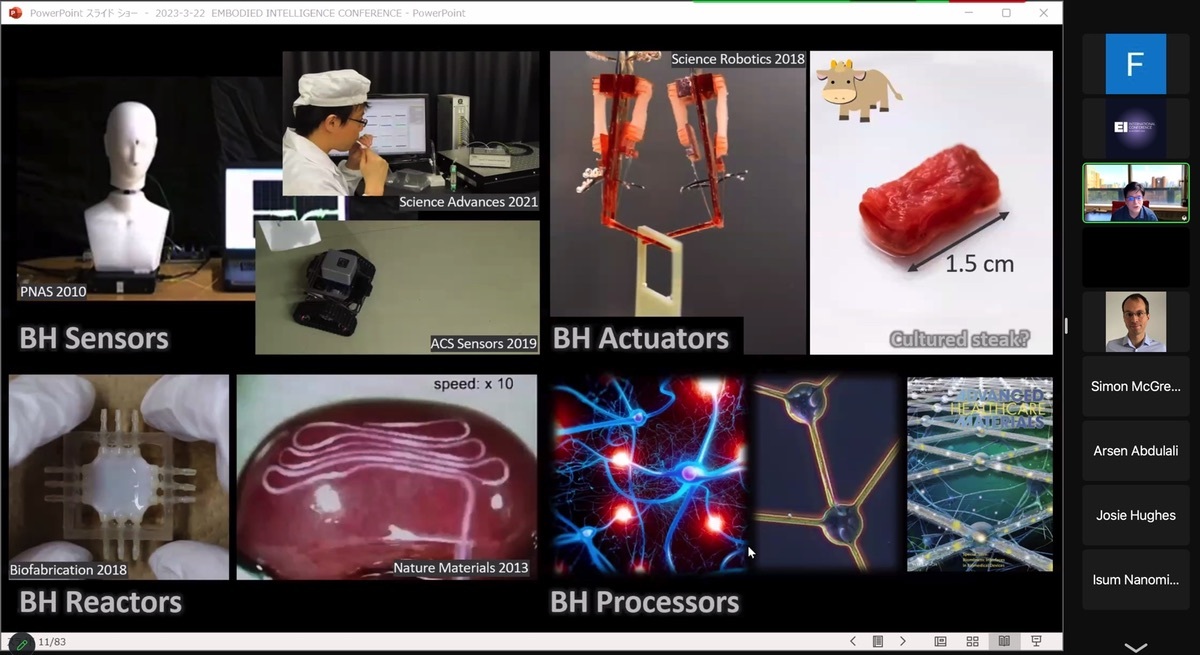

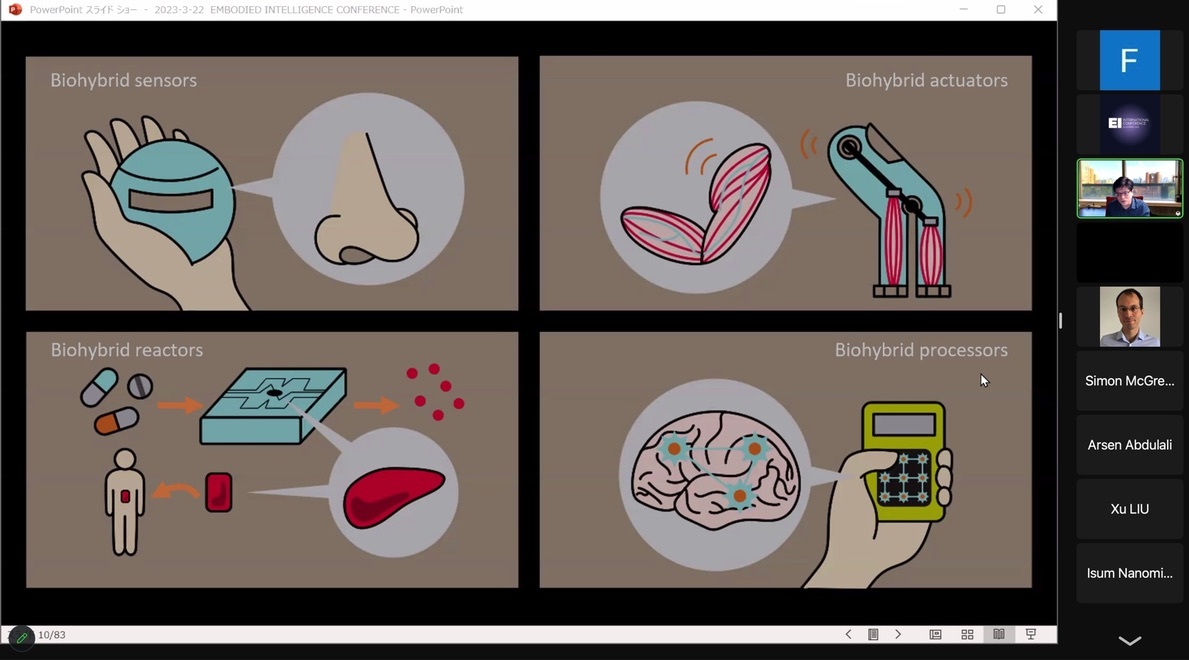

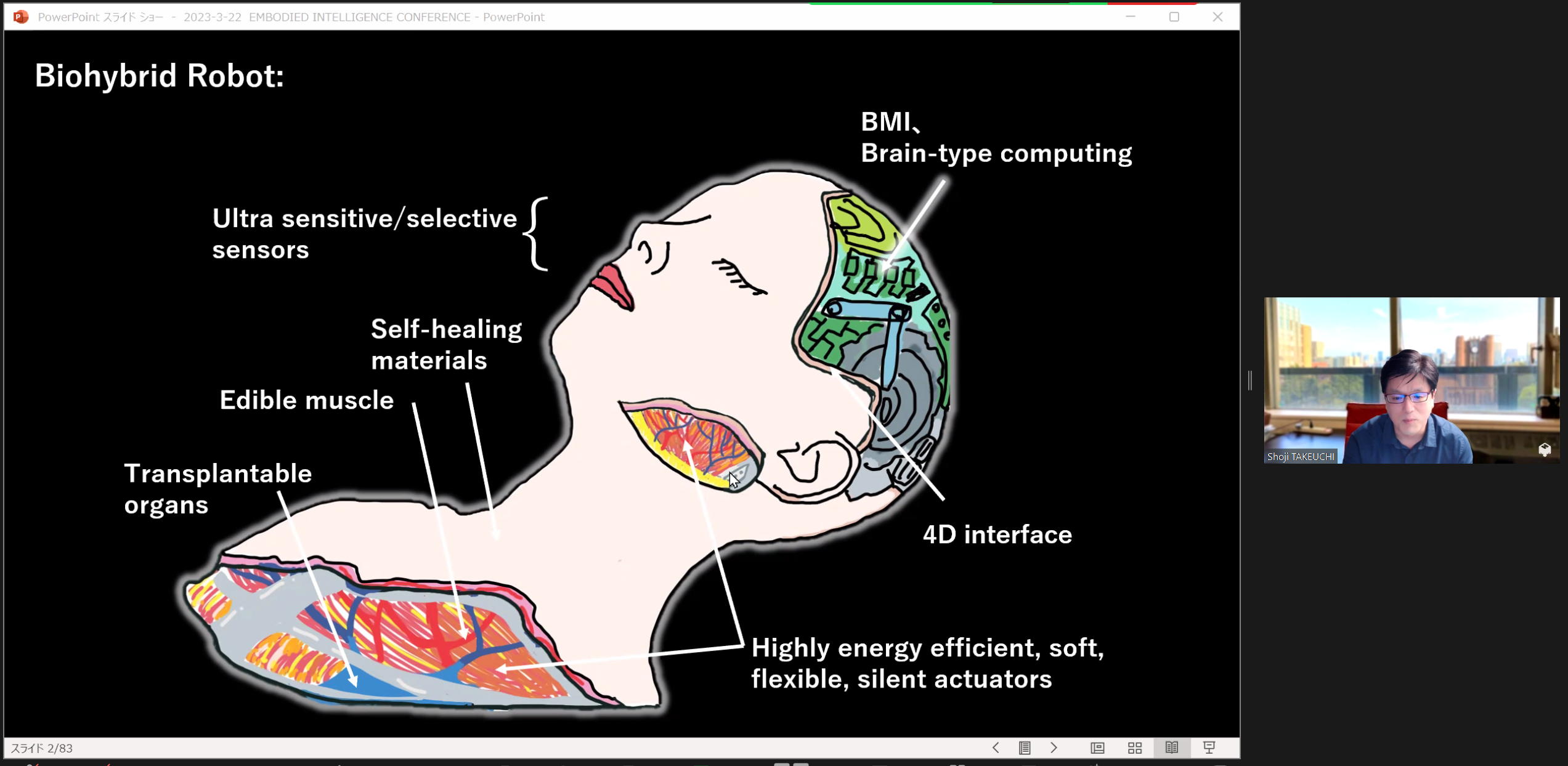

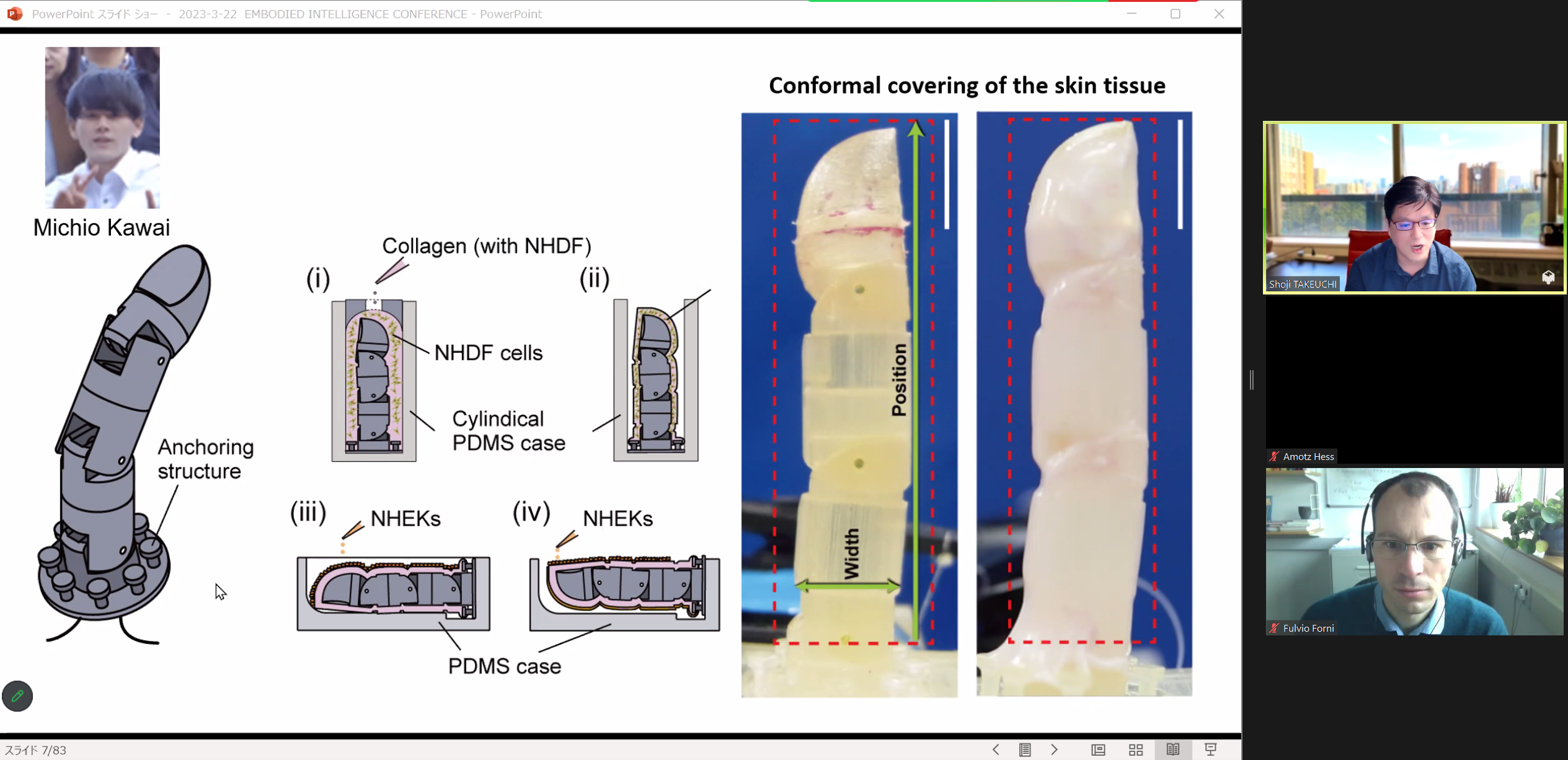

SHOJI TAKEUCHI (University of Tokyo and Institute of Industrial Science, Japan)

VIDEO: BIOHYBRID ROBOTICS

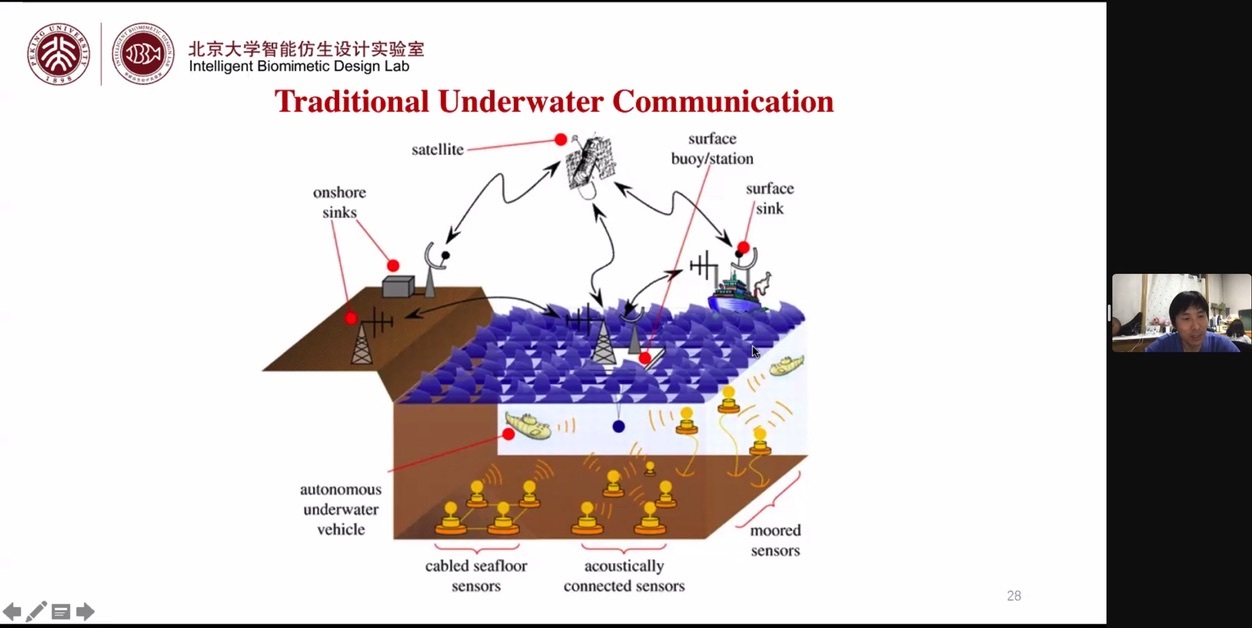

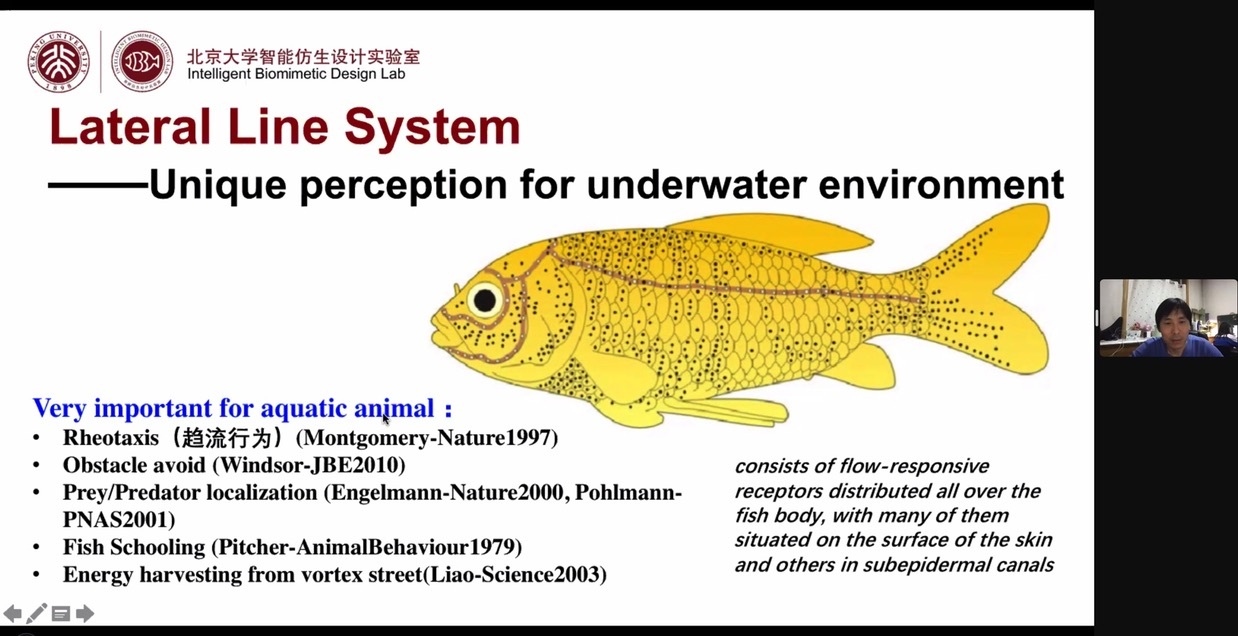

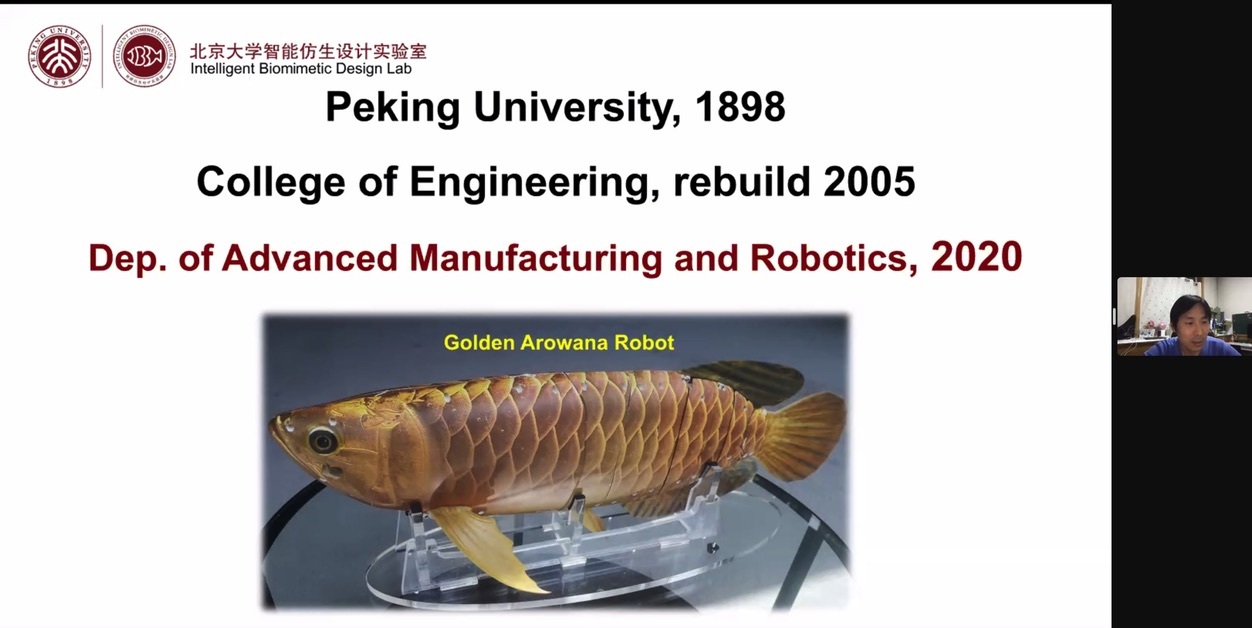

GUANGMING XIE (Peking University, Beijing, China)

VIDEO: BIO-INSPIRED EMBODIED INTELLIGENCE OF UNDERWATER ROBOT

Abstract: Compared to the terrestrial environment, the underwater environment is more hostile and complex, and the development of underwater robots faces many challenges. Inspired by various aquatic organisms, the design and development of bionic underwater robots can effectively improve the intelligence level of underwater unmanned systems. This talk mainly introduces the research progress of our team in the field of underwater bionic robots, including motion, perception, communication, capture, swarm and robot-fish mixed systems.

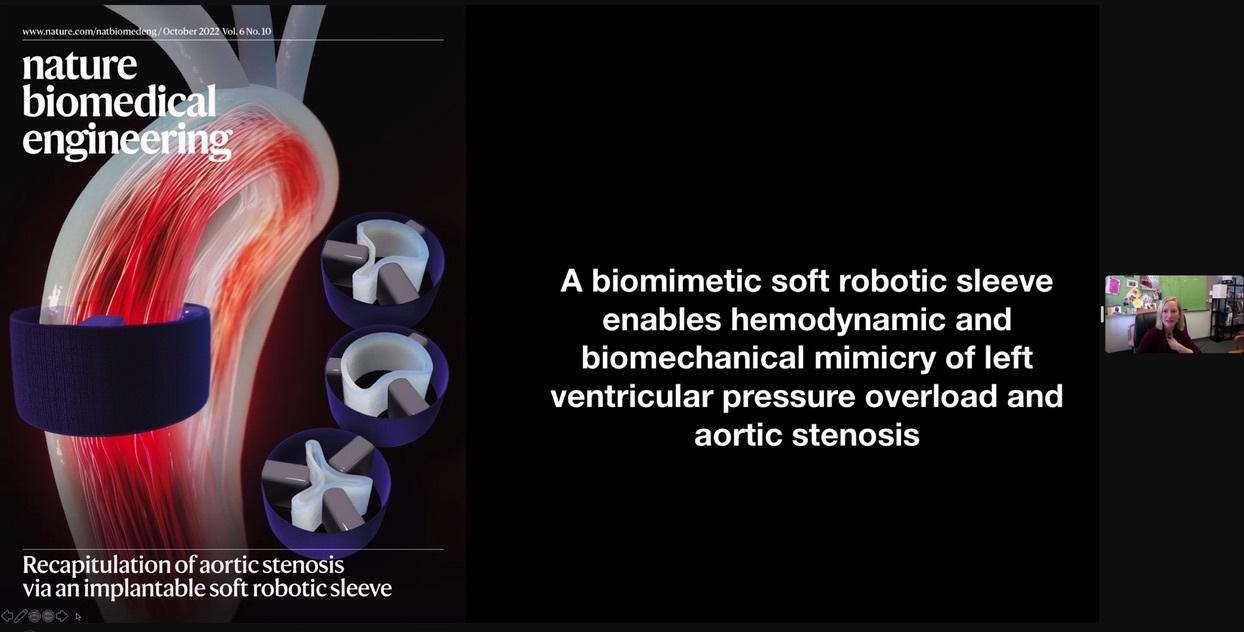

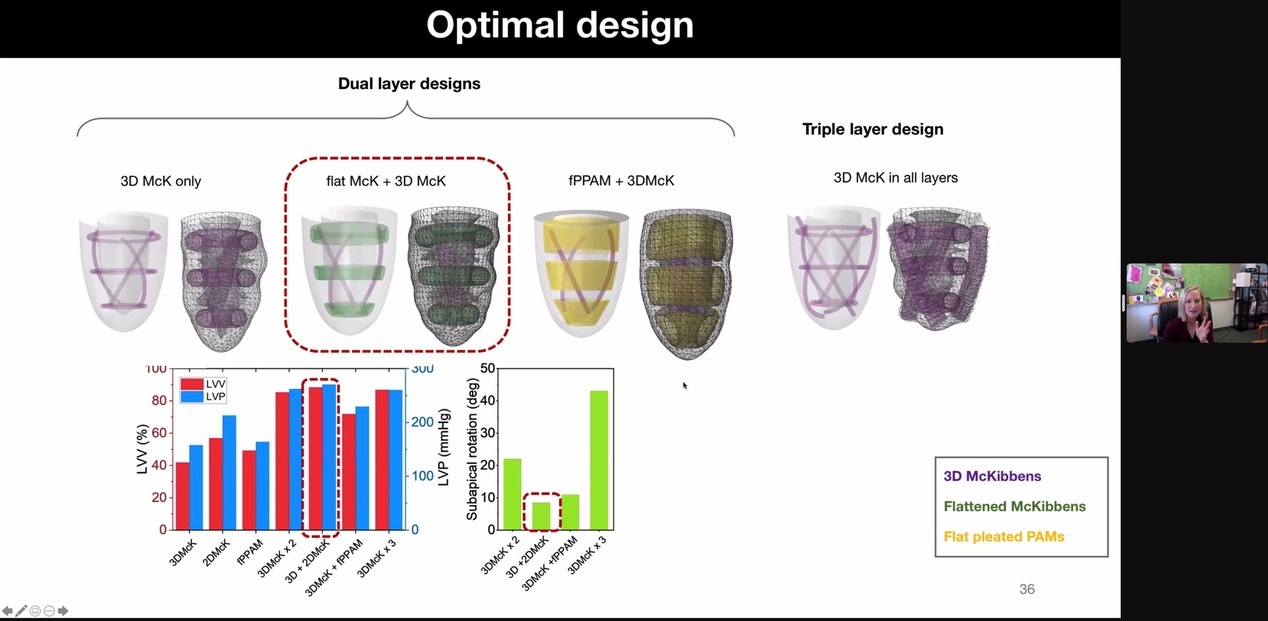

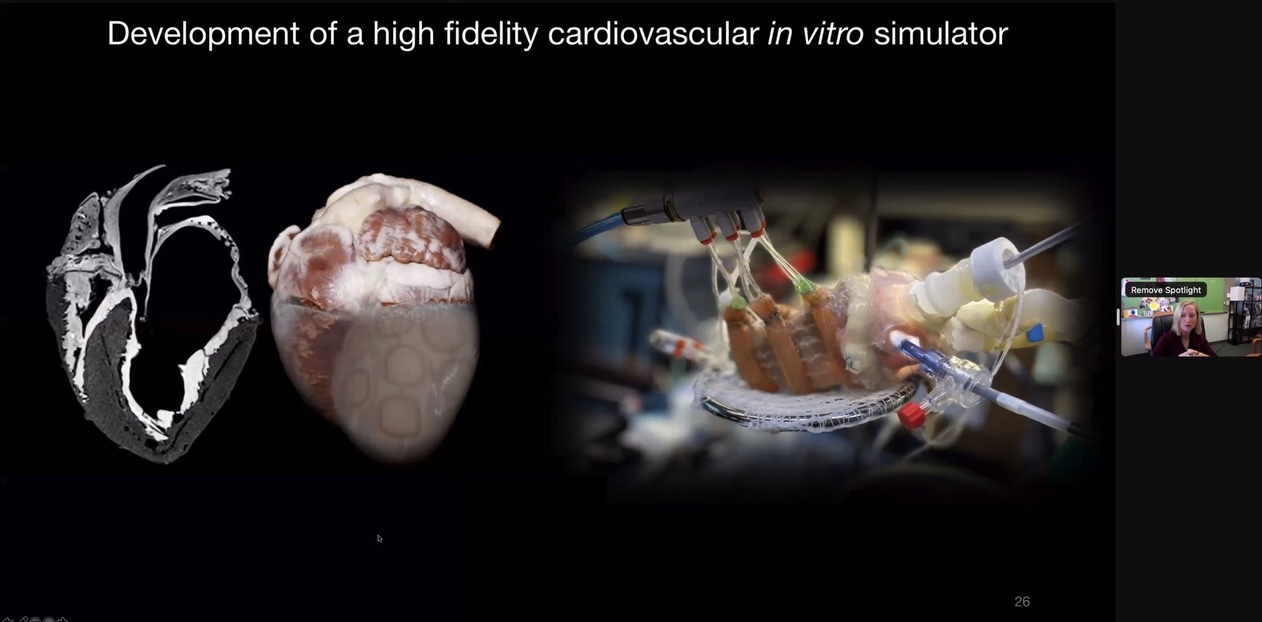

ELLEN ROCHE (MIT, USA)

VIDEO: DEVELOPING EMBODIED INTELLIGENCE INTO ACTIVE ORGAN ASSIST DEVICES AND ENHANCED BENCHTOP SIMULATORS

Abstract: Cyclical dynamic expansion and contraction are essential to the life-sustaining function of organs, exemplified by the heart and lungs. These continuous movements coupled with complex tissue architecture and composite mechanical properties pose considerable challenges to augmenting impaired organ function. My research is providing paradigm-shifting approaches to overcome those challenges, by blending principles of pathophysiology, biomechanics and mechanical engineering with state-of-the-art materials and robotics. In this talk I will speak about two interrelated research streams with inherent embodied intelligence: (i) augmenting the remaining native function in failing organs and biological systems to restore functionality and (ii) developing physiologically realistic in vitro, in vivo, ex vivo and in silico approaches suitable for testing cardiac or pulmonary technologies. I will review my group’s overarching approach to designing these technologies, and how these endeavors have opened up possibilities for further understanding of the biomechanics associated with their targeted organ systems. I will illustrate exemplary work from each research strand with specific vignettes. Finally, I will discuss the potential impact of our work, and how co-designing multimodal simulation models with clinical and industrial partners can not only lead to enhanced implantable device design and testing, but also to further understanding of the fundamental mechanical influencers of pathophysiology and intervention strategies.

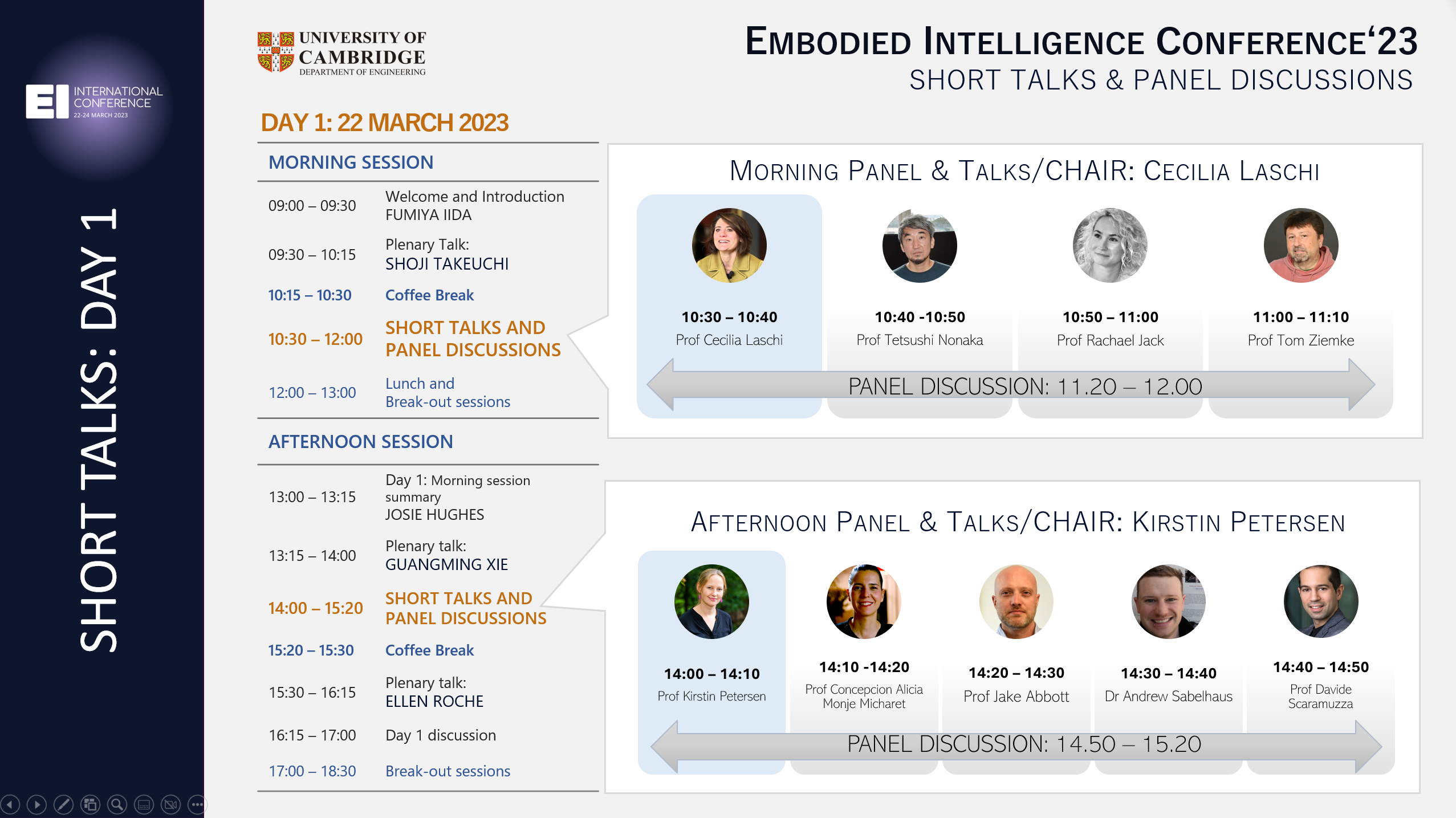

MORNING SHORT TALKS (VIDEOS)

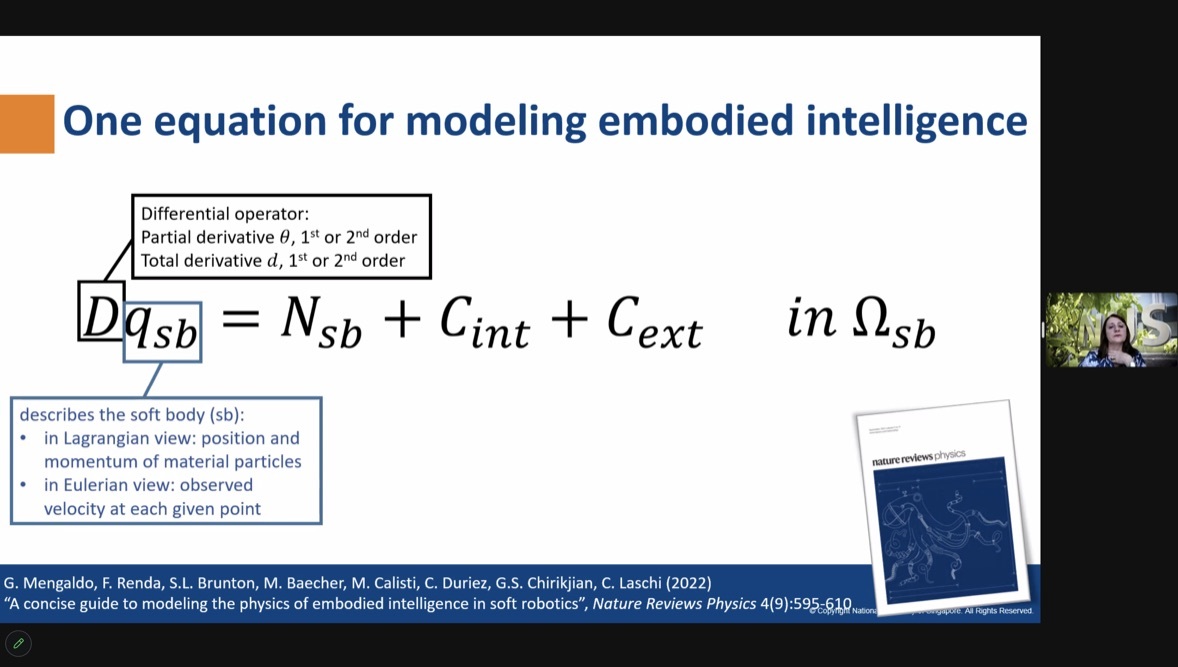

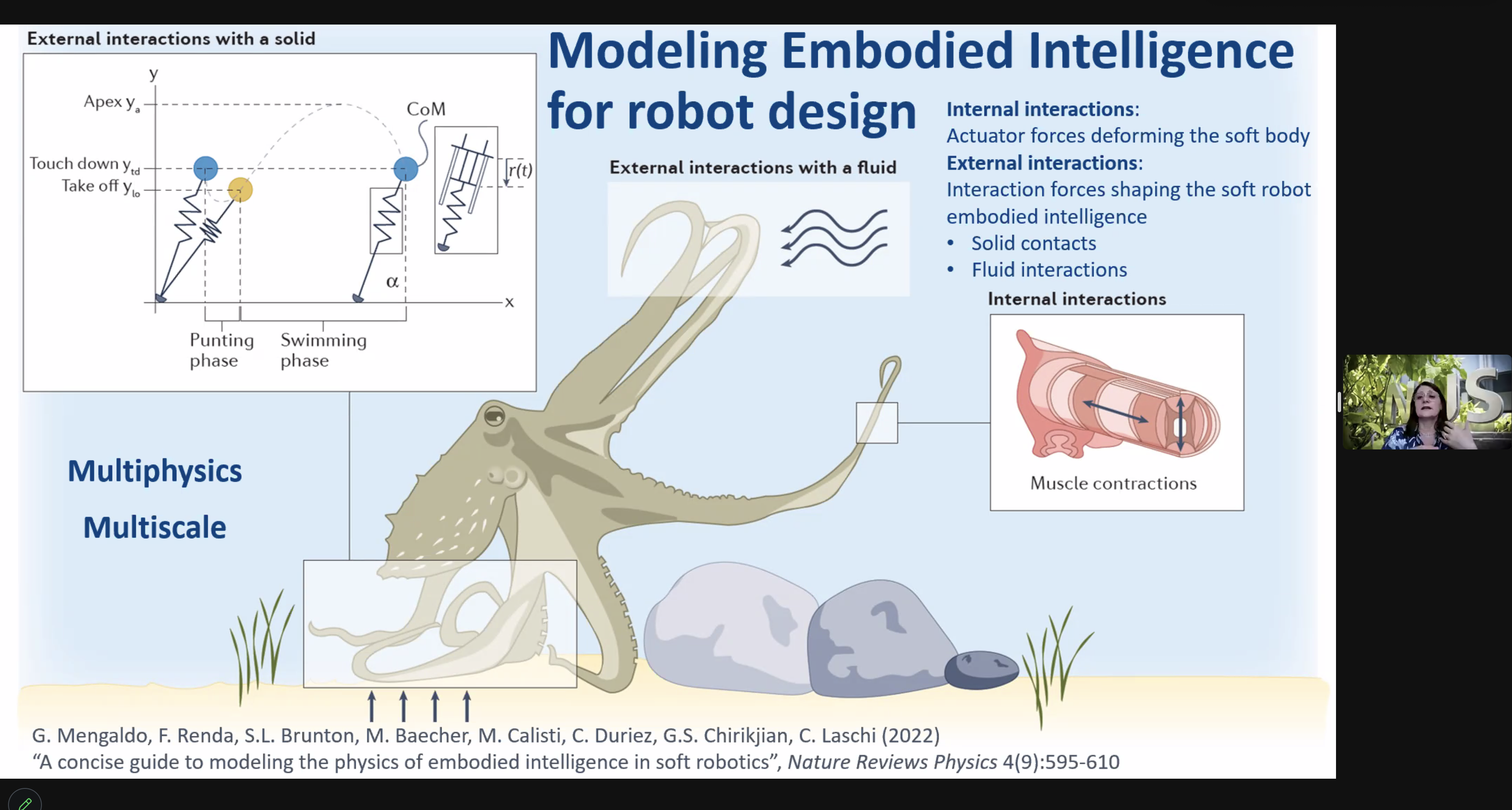

- Cecilia Laschi: Embodied Intelligence and Soft Robotics: from principles to model-informed design

- Abstract: Embodied intelligence is one of the grand lessons that we can take from nature, for improving robot behaviour, which emerges from the interaction with the environment. If control becomes simpler, on one side, the design of soft robots that includes such interplay with the external environment is more complex, on the other side, accounting for morphology, mechanical properties, and interactions. Can we capture embodied intelligence by coupling models of the actuation-induced soft body deformations with those describing external interactions? A shift from the use of embodied intelligence principles to model-informed design in soft robots is needed, and within grasp.

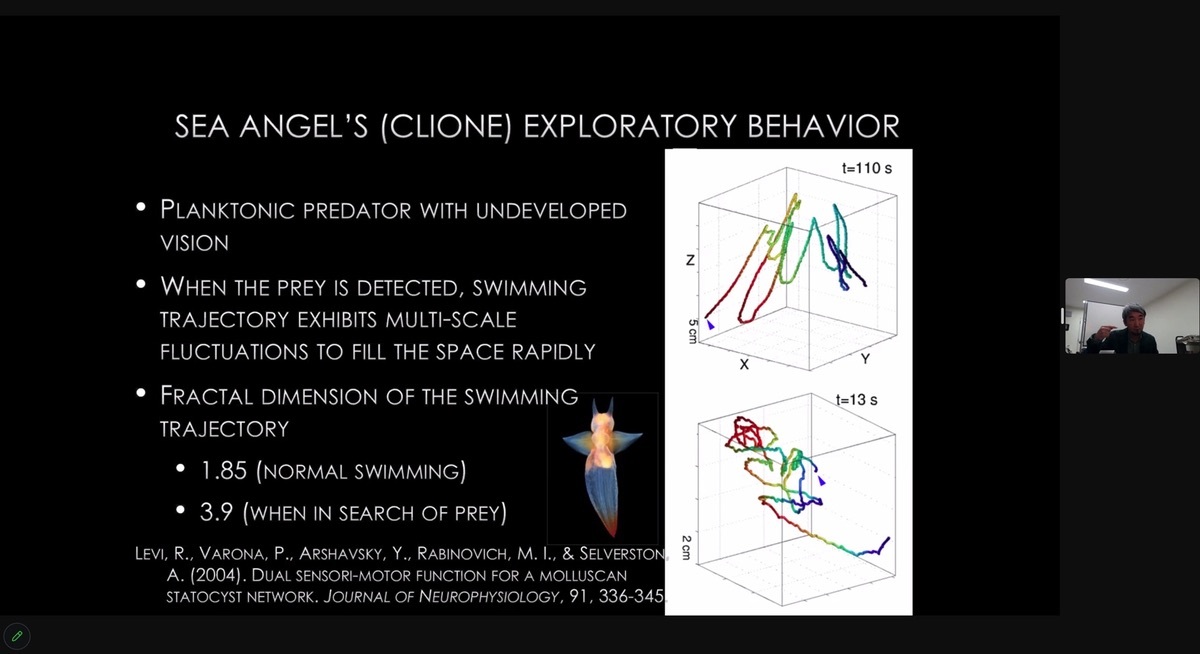

- Tetsushi Nonaka: Reciprocity of agent and environment: Implications for embodied intelligence

- Abstract: Biological agents strive to control encounters with the environment to obtain beneficial encounters while preventing harmful encounters. This control lies in the agent-environment system, which is neither in the agent nor in the environment but in the meeting of the agent and her environment. In this talk, I discuss the issue of what the generative scheme of behavior would be that is open-ended enough to produce variations which fit into different scales of environmental structure on the fly.

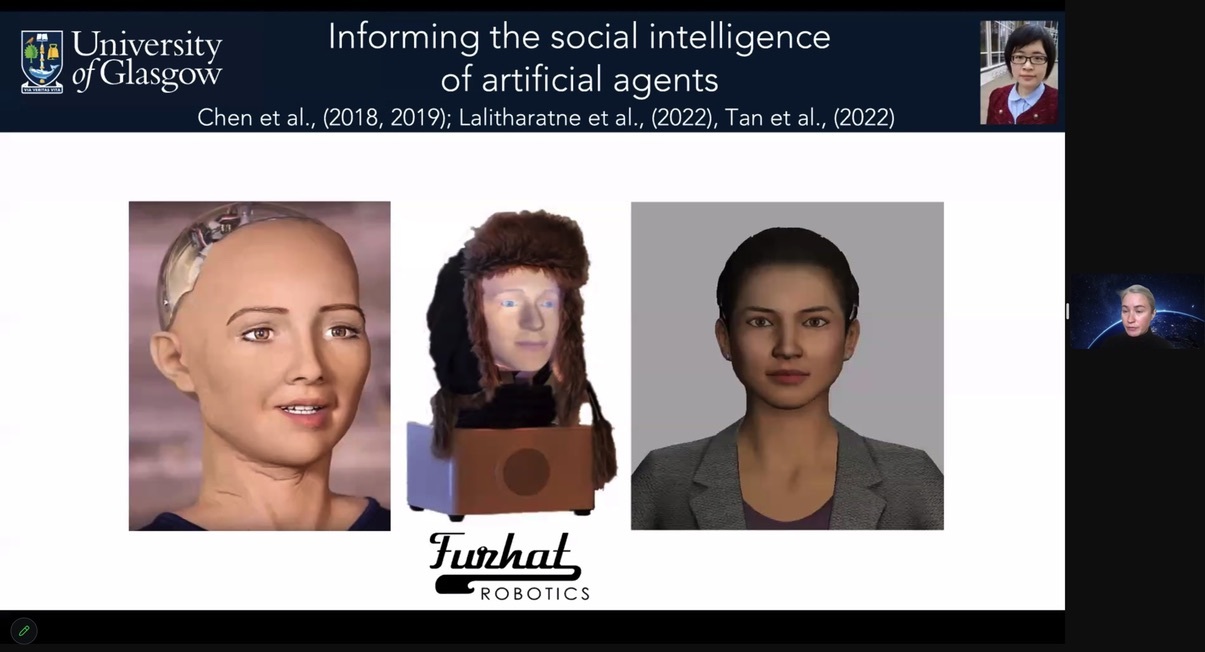

- Rachael Jack: Equipping artificial agents with facial expression intelligence using psychological science

- Abstract: Artificial agents are now increasingly part of human society, destined for schools, hospitals, and homes to perform a variety of tasks. To engage their human users, artificial agents must be equipped with essential social skills such as facial expression communication. However, many artificial agents remain limited in this ability because they are typically equipped with a narrow set of prototypical Western-centric facial expressions of emotion that lack naturalistic dynamics (Jack 2013). Our aim is to address this challenge by equipping artificial agents with a broader repertoire of socially relevant and culturally sensitive facial expressions (e.g., complex emotions, conversational messages, social and personality traits). To this end, we use new, data-driven and psychology-based methodologies that can reverse-engineer dynamic facial expressions using human cultural perception (Yu, Garrod et al., 2012). We show that our human-user-centered approach can reverse-engineer many different, highly recognizable, and human-like dynamic facial expressions that typically outperform the facial expressions of existing artificial agents (Chen, Garrod et al., 2018, Chen et al., 2019). By objectively analyzing these dynamic facial expression models, we can also identify specific latent syntactical signalling structures that can inform the design of generative models for culture-specific and universal social face signalling (Jack et al., 2016). Together, our results demonstrate the utility of an interdisciplinary approach that applies data-driven, psychology-based methods to inform the social signalling generation capabilities of artificial agents (Jack and Schyns 2017). We anticipate that these methods will broaden the usability and global marketability of artificial agents and highlight the key role that psychology must continue to play in their design.

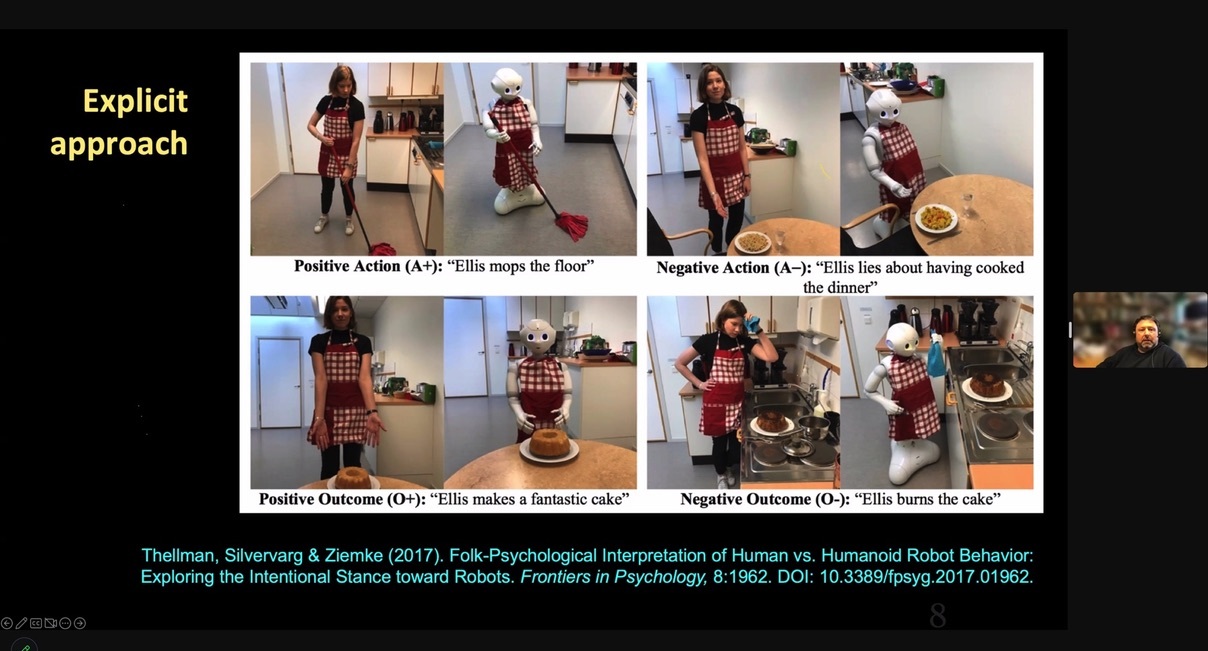

- Tom Ziemke: Social Cognition in Human-Robot Interaction

- Abstract: The talk discusses how people make sense of embodied AI systems in social interactions, in particular how intentional agency is attributed to interpret and predict the behavior of such systems. The talk also addresses what I call the observer’s grounding problem in human-robot interaction and its relation to the ‘old’ symbol grounding problem in embodied AI.

- PANEL DISCUSSION

AFTERNOON SHORT TALKS (VIDEOS)

- Kirstin Petersen: Intelligent Behaviors

- Abstract: I will give a brief overview of representative work in the Collective Embodied Intelligence lab at Cornell, focused on how local interactions between many simple agents can give rise to useful, taskable global behaviors. Within a robot this includes morphological intelligence (i.e. mechanisms where physics, instead of sensing and control, guides behavior). With many robots, this includes distributed coordination mechanisms combining traditional approaches that rely on explicit computation, planning, and communication networks, with novel approaches that rely on embodied intelligence (i.e. coordination through physical interactions between robots, and/or robots that leverage their environment to store, share, and process information).

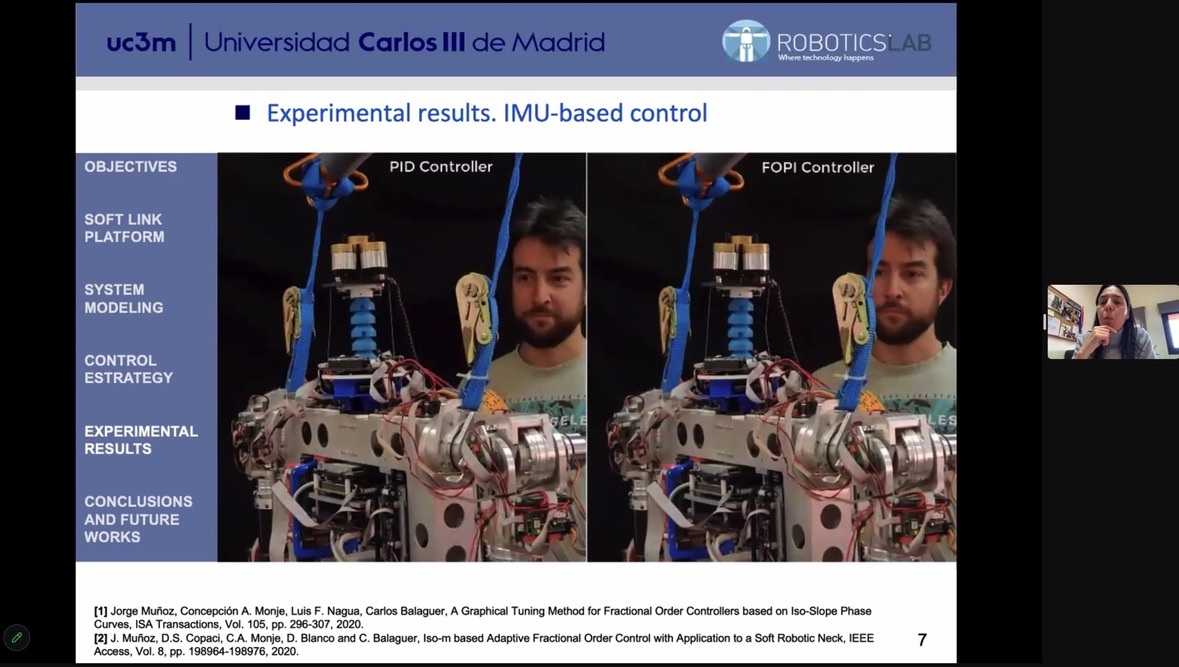

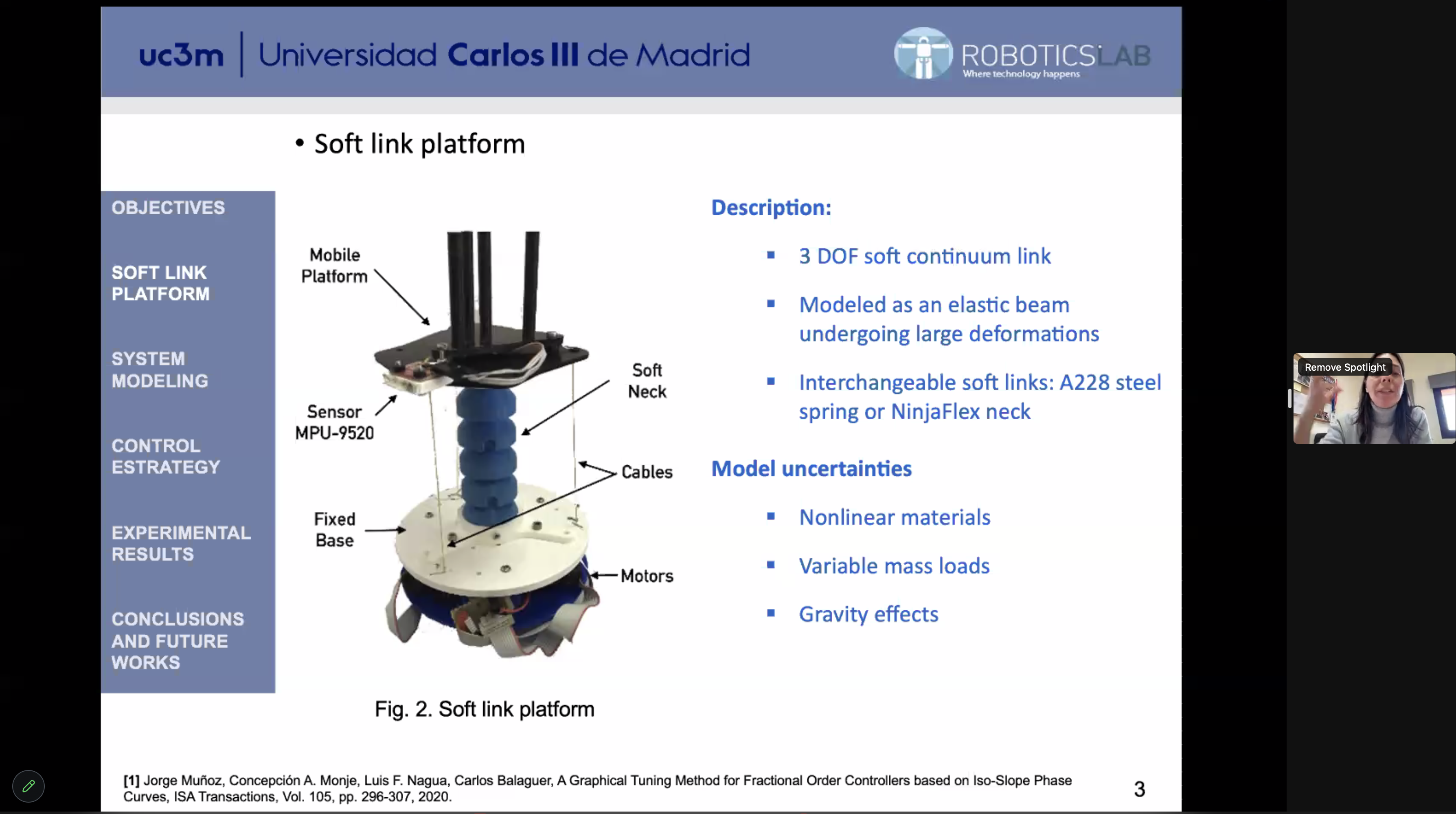

- Concepcion Alicia Monje Micharet: Controlling Soft Robotic Joints with Fractional Order Controls

- Abstract: This talk introduces the design, modeling and control problems related to the development of soft joints to be integrated in robotic platforms. Fractional order control is the type of control proposed to face model mismatches and robustness of the system to different payloads. The results show a robust performance of the real system under different configurations and load conditions.

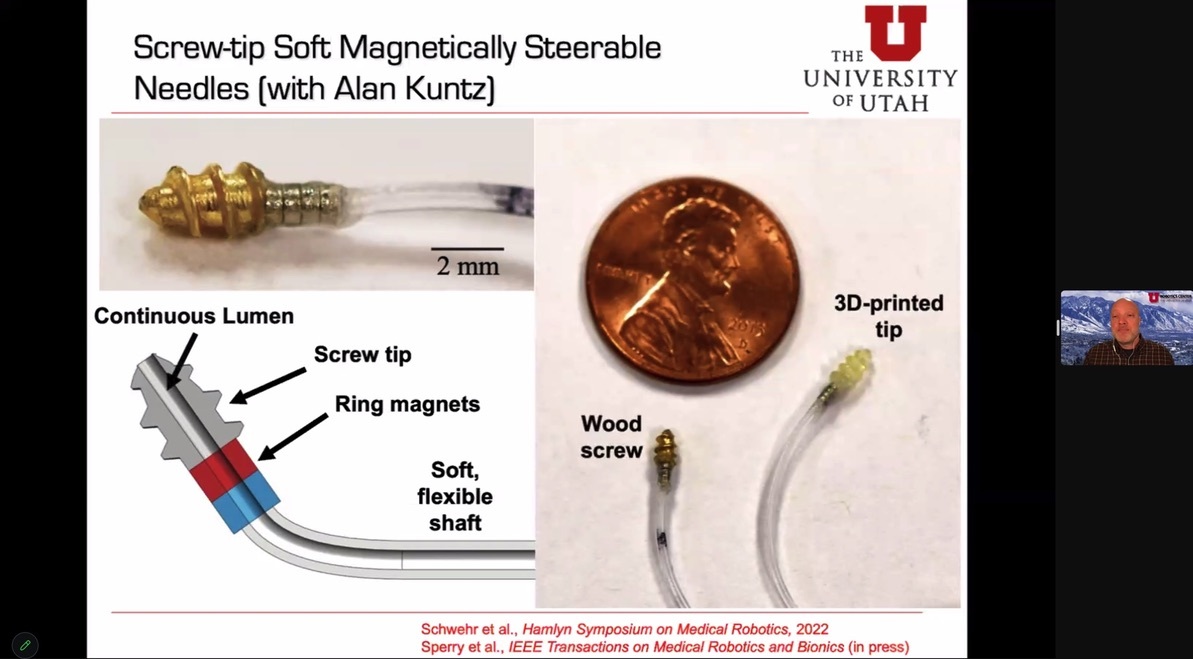

- Jake Abbott: Simple, Soft, Magnetically Actuated Medical Robots

- Abstract: In this talk, I will describe two new medical-robotics design concepts that combine magnetic actuation with soft continuum structures. The first enables catheter- and capsule-like devices that crawl through the natural lumens of the body, such as intestines and blood vessels. The second enables a new type of steerable needle to navigate through soft-tissue environments, such as the brain. The two design concepts share a few features: 1) They reduce the reliance on pushing from the proximal end. 2) They utilize magnetic torque, which scales better than magnetic force for clinical use. 3) They are radially symmetric, such that localization and control in the roll direction is unnecessary. 4) They maintain a working channel to do something useful once in place. 5) They are simple, such that they can be fabricated at a wide range of scales.

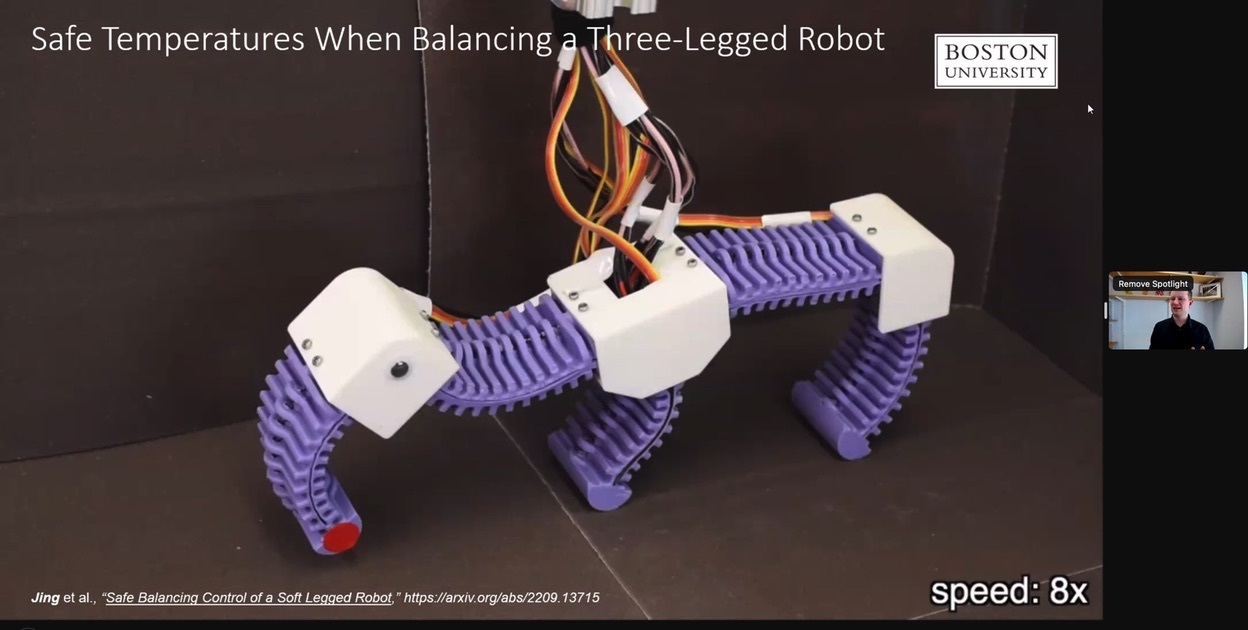

- Andrew Sabelhaus: Challenges in Control and Autonomy for Soft Robots: robustness, scalability and safety

- Abstract: Merging embodied intelligence and artificial intelligence faces many conceptual questions, including the roles for each approach as part of a combined framework. However, current attempts at autonomy for soft robots have been focused mostly on positioning of soft bodies in space, illustrating limitations on real-time computation and knowledge of the robot’s environment. Are we asking the right questions, and working toward the most fruitful control goals? In this talk, I will introduce my work on control systems that do not prioritize perfect tracking of trajectories in the motion of soft robots, but instead show robustness, scalability, and safety. This includes embracing model mismatch and designing controllers that anticipate the simplifications commonly made in dynamics of soft robots. Real-time operation could be addressed by reconceiving control problems as planning problems. And most importantly, focusing on safety verification and invariance may lead to control systems that match our intuitive goals for bringing soft robots out into the world. These three perspectives will be demonstrated in both manipulation and locomotion of soft robots powered by shape memory alloy artificial muscles.

- Davide Scaramuzza: Autonomous, Agile Micro Drones – Learning to Fly

- Abstract: I will summarize our latest research in learning deep sensorimotor policies for agile vision-based quadrotor flight. Learning sensorimotor policies represents a holistic approach that is more resilient to noisy sensory observations and imperfect world models. However, training robust policies requires a large amount of data. I will show that simulation data is enough to train policies that transfer to the real world without fine-tuning. We achieve one-shot sim-to-real transfer through the appropriate abstraction of sensory observations and control commands. I will show that these learned policies enable autonomous quadrotors to fly faster and more robustly than before, using only onboard cameras and computation. Applications include acrobatics, high-speed navigation in the wild, and autonomous drone racing.

- PANEL DISCUSSION
